As enterprises move from AI pilots to full deployment, a harder problem is coming into focus. The bottleneck isn’t compute or tooling. It’s judgment. Without it, AI doesn’t make organizations smarter. It just makes them faster at making bad decisions.

Most workforce strategies haven’t caught up. When companies talk about the human skills employees need to work alongside AI, the conversation tends to collapse into the same shortlist: critical thinking, creativity, collaboration, growth mindset and so on. These are useful concepts, but they’re too vague to act on. And they are far removed from how human-AI work actually plays out.

Imagine an agent that has already triaged three incidents by the time a team lead logs in. What does critical thinking look like at that moment? What does creativity mean when a workflow designer must rearchitect a customer support process that can escalate, hand off, and learn from every interaction? At that level of abstraction, terms like “creativity” and “critical thinking” give enterprises nothing to work with. They can’t assess them, teach them, or track whether anyone is getting better at them.

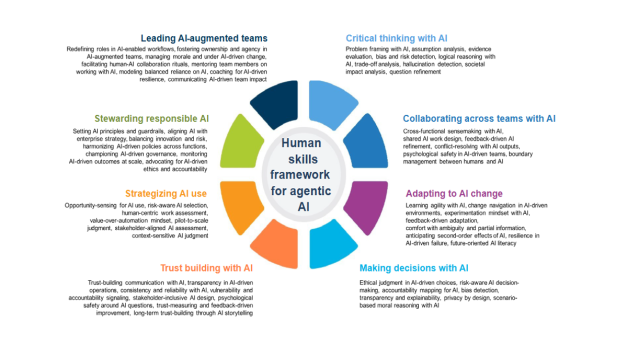

This is the gap that IDC’s new Human Skills Framework for Agentic AI is designed to address.

Breaking down the vague

The IDC Human Skills Framework for Agentic AI identifies eight clusters of human capability that organizations need to build now. Each cluster unpacks into specific, trainable subskills, the kind IT leaders can assess, teach and track.

Take critical thinking. The framework breaks it down into problem framing, assumption spotting, hallucination detection, trade-off analysis and metacognition with AI, which is the habit of asking whether AI is quietly shaping your conclusions in ways you haven’t noticed. These are more than just soft skills, or human skills. They are operational disciplines.

The same logic applies to decision-making with AI. Leaders need to be able to map accountability for AI-driven choices, recognize when outputs introduce disparate impact, and make privacy-by-design decisions before agents touch sensitive data. The framework calls these out as distinct, learnable behaviors. They matter because humans must remain in the lead. But if leaders lack the analytical judgment to know when to accept AI outputs and when to push back on them, AI becomes just a mechanism for making bad decisions faster.

Human judgment is a guardrail

Most organizations think of AI guardrails as a technical problem, something the model team or the platform vendor handles. The IDC framework pushes back on that assumption.

Human judgment is a guardrail. When an agent recommends a configuration change and a senior engineer signs off without questioning the logic chain, that’s a failure of critical thinking. It has nothing to do with the model. When a team can’t explain an AI-assisted decision to a skeptical regulator, that’s a trust-building failure, not a communications problem.

The framework is built on the premise that humans need to be explicitly trained to catch the things AI will miss. And they must do it consistently, not just when they happen to be paying attention.

The hybrid role gap

Organizations are already creating hybrid roles that sit across IT, operations and the business. IDC is seeing the rise of workflow orchestrators, risk monitors and human-agent collaboration leads. These roles typically get staffed with strong technologists. The problem is that the skills those roles actually require (facilitation, change management, cross-functional sensemaking, storytelling) often aren’t part of a technologist’s development path.

The framework gives HR and L&D leaders a map for closing that gap intentionally. Because it’s modular, it can plug into existing competency models rather than requiring organizations to start from scratch.

Saying no is a skill, too. Employees need the discipline to decline AI-driven solutions that are too risky, irrelevant or ill-suited to the context. That is a trainable behavior that appears in the framework under the strategizing AI use cluster, alongside opportunity-sensing and pilot-to-scale judgment.

The inclusion is deliberate. Organizations stuck in perpetual proof-of-concept mode, or chasing automation for automation’s sake, need leaders who can push back on bad AI ideas as clearly as they can champion good ones. That requires analytical judgment.

What to do with it

The framework isn’t meant to be read once and filed. IDC’s guidance for technology buyers, appended to the research, emphasizes keeping it alive. As AI agents grow more capable and new use cases emerge, the skills and training examples need to update alongside them. A static competency model will age out fast.

The practical starting point: map a small set of critical behaviors to specific AI use cases. Avoid long competency lists. Equip managers to coach employees on AI use day to day in the flow of work. That’s a lot more effective than just pointing people toward courses. Build cross-functional squads that experiment with AI workflows and share what they learn.

The central bet the framework makes is that organizations succeeding with agentic AI won’t be the ones with the most sophisticated models. They’ll be the ones whose people know what to do when the model gets it wrong.