The era of the linear supply chain, optimized solely for cost, speed, and sequential handoffs, is over. In that model, if one link breaks, the entire chain halts, because there is no built-in redundancy or networked capability to route around the problem. Looking toward 2030, the defining characteristic of successful operations is no longer efficiency alone; it is intelligence at scale. The shift to an “ecosystem” or “network” model is therefore critical for 2026 and beyond.

The last few years have served as a brutal stress test for legacy models, exposing structural fault lines that “optimization” can no longer hide. In late 2024 and throughout 2025, we witnessed a convergence of volatility that linear chains simply could not absorb.

Three specific industry failure modes have emerged from this period, signaling why a new direction is inevitable:

- The Tier-N Blindspot (The Visibility Gap): A major automotive manufacturer recently halted production when a climate event impacted a Tier 3 sub-component provider. Lacking multi-tier visibility, the planning team remained unaware of the risk until Tier 1 shipments ceased.

- The “Digital Tower of Babel” (The Interoperability Gap): During recent bouts of port congestion, manual handoffs between disparate systems prevented logistics networks from adapting, causing cascading delays. Agile firms pivoted quickly using open platforms, while traditional operators remained trapped by disconnected data.

- The Expanded Attack Surface (The Security Gap): Rapid growth in IT and OT connectivity without robust security has turned supply chain networks into prime targets for ransomware, cyber-physical attacks on IoT equipment, and AI-enabled attack vectors. In response, enterprises are deploying distributed, AI-driven security systems to proactively neutralize risks that span everything from external partners to internal operations.

These are not isolated incidents; they are the growing pains of a sector in transition. They underscore why the next five years will not be defined by better silos, but by the dissolution of silos altogether.

These insights reflect IDC’s 2026 FutureScape: Worldwide Supply Chain and Industry Ecosystems research, which outlines the forces reshaping global operations and the capabilities leaders must prioritize. Explore the full predictions in the global report.

Three Trends Defining the Future of Supply Chain Ecosystems

Emerging from this volatility are three distinct trends that will define the path to 2026 and beyond.

1. Multi-Enterprise Orchestration: Visibility That Extends Beyond Boundaries

Disruptions now emerge across extended supplier tiers, logistics partners, and regional networks. Traditional visibility approaches anchored in ERP data and Tier 1 insights are no longer sufficient.

Supply chains must evolve into multi-enterprise networks that enable:

- Real-time visibility beyond Tier 1 suppliers

- Shared alerts and contextual intelligence among all partners

- Coordinated response actions across nodes

This shift moves visibility from a standalone tool to an integrated capability woven through planning, execution, and risk management.

As a result, IDC predicts(1):

By 2028, 50% of enterprise-scale supply chains will use business networks to enable n-tier visibility, serving as a key mechanism to reduce the impact of disruption and improve response speed by 25%.

Organizations that build this foundation gain faster detection, more accurate impact assessment, and greater confidence under volatility.
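To make the Tier-N blindspot concrete, here is a minimal sketch of what n-tier visibility means in data terms, assuming the network is represented as a simple map from each company to its direct suppliers. All company names are hypothetical, and this is an illustration of the idea, not IDC's methodology or any business network platform's API. A breadth-first walk from a disrupted sub-tier supplier toward its customers reveals every downstream node at risk and how many tiers separate it from the source, which is exactly the information a planner with Tier 1 data alone cannot see.

```python
from collections import deque

# Hypothetical supplier map: each company lists its direct suppliers.
SUPPLIES_FROM = {
    "OEM": ["T1-Seats", "T1-Electronics"],
    "T1-Seats": ["T2-Foam"],
    "T1-Electronics": ["T2-Boards", "T2-Harness"],
    "T2-Boards": ["T3-Chips"],
    "T2-Harness": ["T3-Copper"],
    "T2-Foam": [],
    "T3-Chips": [],
    "T3-Copper": [],
}


def downstream_exposure(supplies_from, disrupted):
    """Return every company that depends, directly or indirectly, on
    `disrupted`, mapped to the number of hops between them."""
    # Invert the map so we can walk from a supplier to its customers.
    customers = {node: [] for node in supplies_from}
    for buyer, suppliers in supplies_from.items():
        for supplier in suppliers:
            customers.setdefault(supplier, []).append(buyer)

    # Breadth-first search downstream from the disrupted node.
    exposure = {}
    seen = {disrupted}
    queue = deque([(disrupted, 0)])
    while queue:
        node, hops = queue.popleft()
        for buyer in customers.get(node, []):
            if buyer not in seen:
                seen.add(buyer)
                exposure[buyer] = hops + 1
                queue.append((buyer, hops + 1))
    return exposure


if __name__ == "__main__":
    # A disruption at a Tier 3 chip supplier reaches the OEM in three hops.
    print(downstream_exposure(SUPPLIES_FROM, "T3-Chips"))
```

Running it prints `{'T2-Boards': 1, 'T1-Electronics': 2, 'OEM': 3}`. In practice the supplier map would come from partner data shared over a business network; the graph walk itself stays simple once that data exists.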

2. Supplier and Partner Ecosystems: Interoperability as a Performance Multiplier

The ability to work seamlessly across partner ecosystems will define future competitiveness. Interoperability, once a technology challenge, is now a strategic one.

Next-generation supply chains require platforms that:

- Integrate supplier, logistics, and customer systems with minimal friction.

- Support shared workflows, not just shared data.

- Enable AI agents to operate across organizational boundaries.

- Maintain consistent process logic, metrics, and governance across nodes.

As more partners connect to shared platforms, these networks become orchestrated ecosystems rather than loose collections of bilateral relationships.

As a result, IDC predicts:

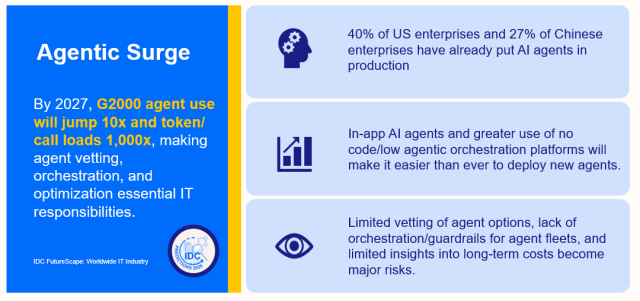

By 2029, 45% of G2000 companies will have adopted agentic AI–driven channel management and orchestration, driving a 20% revenue uplift and a 30% improvement in partner and customer satisfaction scores.

This interoperability amplifies agility: when market conditions shift, changes propagate across partners in hours, not months.

3. Data Foundations and Distributed AI-Driven Security: Trust at Ecosystem Scale

As supply chains become more interconnected, the surface area for cyber and data risk expands dramatically. At the same time, AI’s effectiveness depends on high-quality, secure, and interoperable data.

A modern supply chain must invest in:

- Federated data models enabling domain-level control with shared standards.

- Governance frameworks that ensure consistent semantics, lineage, and quality.

- Distributed AI-driven security that continuously assesses ecosystem risk.

- Zero-trust principles applied across suppliers, platforms, and data flows.

Trust is no longer about internal compliance. It is about ensuring safe, reliable data movement across the entire network, because partner data is now operational data.

As a result, IDC predicts:

To secure supply chains, by 2030, 60% of large enterprises will deploy distributed AI-driven cybersecurity, enabling proactive third-party risk management as AI adoption intensifies cyber risks.

These foundations ensure AI-driven decisions are grounded in secure, high-integrity data flowing consistently across partners.

Future Imperatives for Operations and Supply Chain Leaders

The predictions point to one conclusion: supply chains must operate as intelligent, interconnected ecosystems. To lead in this environment, COOs and CSCOs should focus on five strategic imperatives anchored in the three core themes.

1. Transform N-Tier Visibility into Operating Infrastructure

Treat your supply chain as a system of systems. Visibility must shift from periodic reporting to a live intelligence layer that detects disruptions at their source, whether in a sub-tier supplier or a regional hub. Establish shared workflows and coordinated decision-making models to reduce blind spots and shorten recovery times.

2. Architect for Interoperability to Accelerate Execution

Shift from one-off integrations to platform-based ecosystems where suppliers, carriers, and manufacturers connect with minimal friction. When systems “speak” fluently, coordination becomes orchestration, leading to fewer handoffs, lower latency, and faster alignment under stress. Select platforms that enable partners to plug in without extensive customization.

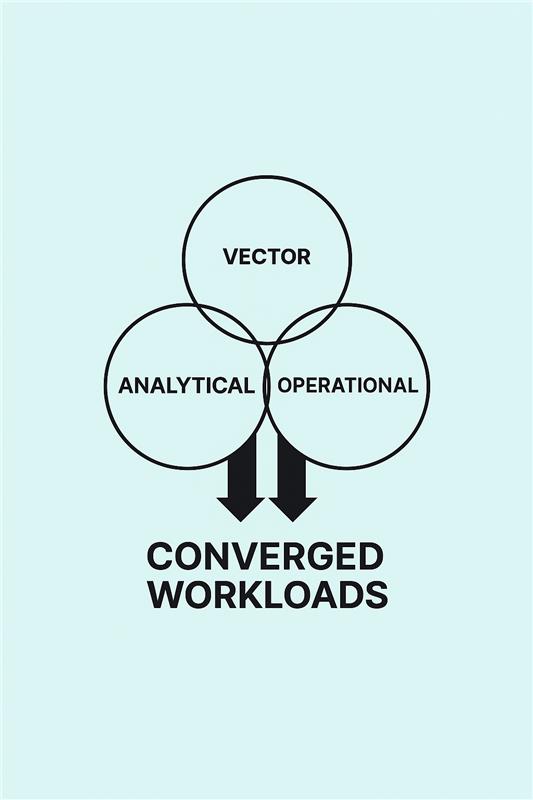

3. Treat Data Readiness as the Precursor to AI Scale

AI agents cannot scale without clean, governed, and interoperable data. Conduct a cross-functional audit of data availability and structure. Ensure that core datasets, including supplier, logistics, and product data, are aligned and secure. Data readiness is now AI readiness; without it, advanced capabilities like automated forecasting and risk sensing will fail.

4. Embed Distributed Security as a Resilience Pillar

As connectivity grows, security becomes the foundation that protects visibility and orchestration. Integrate third-party cyber assessments into supplier scorecards and deploy continuous monitoring tools. Adopt zero-trust principles across systems and data flows to detect anomalies early and maintain continuity even when threats emerge elsewhere in the network.

5. Leverage Ecosystem Intelligence for Value Beyond Productivity

Use interoperable platforms to enable new service models, dynamic capacity sharing, and sustainability-led optimization. Expand the definition of value to include resilience, customer trust, and ecosystem performance, turning the network itself into a competitive advantage.

The Leadership Mandate

Supply chains are becoming ecosystems. AI will accelerate this shift, but its value depends on network strength: visibility, interoperability, and data integrity.

Leaders must champion modernization that aligns partners, platforms, and data, because that alignment is core to both strategic growth and operational continuity.

Investing in multi-enterprise orchestration, ecosystem interoperability, and AI-ready data foundations enables organizations to build responsive, resilient, future-ready supply chains.

Join Stephanie Krishnan for an upcoming webinar on 24 February 2026, 1:30 PM SGT, on what agentic AI readiness means in Asia Pacific and how organizations can move from proof of concept to production responsibly.