For the past few years, large language models (LLMs) have dominated the AI conversation, and for good reason. They have transformed how we interact with software, accelerated content creation, and unlocked new forms of productivity.

But here is the reality enterprises need to internalize in 2026: the future of AI is no longer about a single model architecture.

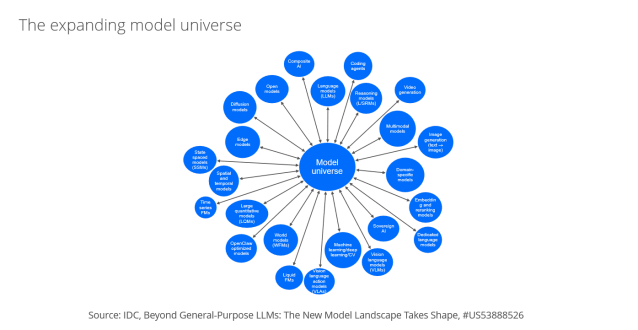

A new AI model ecosystem is rapidly taking shape, one that is more diverse, more specialized, and far more powerful when orchestrated correctly.

The shift is subtle, but its implications are massive.

What is replacing LLM-only AI strategies? The shift to “any-to-any” models

LLMs, largely built on transformer architectures, still play a central role. But they are no longer sufficient on their own. Across industries, we are seeing a more diverse model landscape emerge that includes:

- Deep reasoning models with adaptive thinking

- Multimodal models (text, image, video, audio)

- Small, efficient models for edge and latency- or cost-sensitive use cases

- Domain-specific models tailored to industries like healthcare, finance, and manufacturing

- Dedicated language models that excel in underrepresented languages

- Quantitative and physics-based models for scientific, simulation-heavy, and real-world workloads, ultimately enabling physical AI

- Models with architectures that go beyond or extend the standard transformer, such as structured state space models (SSMs), mixture of experts (MoE), liquid neural networks (LNNs), and world models, which approach problems differently and suit tasks such as time series and spatial reasoning

- Vision-language models built on novel architectures

- Vision-action and world models that interact with real environments

- Autonomous AI agents that interact with the real world, search the web, manage knowledge work, and use tools

This expansion is happening because real-world problems are not purely linguistic, and many business requirements are not easily met by transformer-based language models alone. Enterprises are discovering that while LLMs “think in language,” many business problems require reasoning in numbers, space, time, and physics.

To accomplish real work and enable action-oriented outcomes, models need different skill sets and must be trained on a more diverse corpus of data. Solving a business problem may require a combination of models, some optimized for reasoning and others for execution.

Why multi-model AI matters for enterprise strategy

This is not just a technical evolution. It is a strategic one. Organizations that continue to treat AI as a single-model problem will quickly hit limitations in:

- Accuracy

- Cost efficiency

- Scalability

- Use case coverage

Meanwhile, those adopting a multi-model, multimodal, and multi-agent approach will unlock entirely new capabilities, including action-oriented AI.

How to build a multi-model AI strategy: Three actions to take now

1. Treat model selection as a core capability

Most enterprises still underinvest in model selection. That is a mistake. Choosing the right model is no longer a one-time decision. It is an ongoing enterprise capability involving:

- Matching model types to use cases

- Evaluating trade-offs (cost versus performance versus latency)

- Routing tasks dynamically across models

Action: Build internal processes or platforms for continuous model evaluation and benchmarking. Think of this like cloud cost optimization, but for AI.
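The three activities listed above (matching model types to use cases, weighing cost against performance and latency, and routing tasks dynamically) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `ModelProfile` fields, the model names, and every cost, latency, and quality figure are hypothetical placeholders that a real deployment would replace with its own benchmark results.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional, Sequence


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical catalog entry; all numbers are illustrative, not measured."""
    name: str
    task_types: FrozenSet[str]  # use cases this model is matched to
    cost_per_call: float        # arbitrary cost units
    latency_ms: float           # typical response time
    quality: float              # internal benchmark score in [0, 1]


def route(task_type: str,
          candidates: Sequence[ModelProfile],
          max_latency_ms: float,
          min_quality: float) -> Optional[ModelProfile]:
    """Return the cheapest model that fits the task and meets both limits."""
    eligible = [
        m for m in candidates
        if task_type in m.task_types
        and m.latency_ms <= max_latency_ms
        and m.quality >= min_quality
    ]
    if not eligible:
        return None  # no model qualifies; the caller decides how to fall back
    return min(eligible, key=lambda m: m.cost_per_call)


# Hypothetical portfolio with made-up figures.
PORTFOLIO = [
    ModelProfile("general-llm", frozenset({"text", "summarization"}), 1.00, 900, 0.90),
    ModelProfile("small-edge-model", frozenset({"text", "summarization"}), 0.05, 80, 0.72),
    ModelProfile("time-series-model", frozenset({"forecasting"}), 0.20, 150, 0.88),
]

choice = route("summarization", PORTFOLIO, max_latency_ms=200, min_quality=0.70)
print(choice.name)  # prints "small-edge-model"
```

The design choice here is to treat latency and quality as hard constraints and cost as the quantity to minimize. Other policies, such as a weighted score across all three, are equally plausible; the point is that the policy is explicit and can be re-evaluated whenever benchmark numbers change.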

2. Design for a “model portfolio,” not a single stack

Your future AI architecture will look less like a stack and more like a portfolio, with different models playing distinct roles: general-purpose LLMs for language tasks, quantitative models for large numerical workloads, and vision-language and other multimodal models for understanding and generating images, video, and other content. The possibilities are extensive.

This “constellation of models” is becoming the new normal.

Action: Start mapping your top 10 AI use cases and identify where different model types could outperform a single LLM approach.
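One way to make this mapping exercise concrete is to record an evaluation score per use case and per model type, then flag the use cases where a specialized model beats the general-purpose LLM by a meaningful margin. The sketch below assumes such scores already exist; the use-case names, model names, scores, and the `margin` threshold are all hypothetical.

```python
from typing import Dict, Tuple


def find_portfolio_candidates(
    scores: Dict[str, Dict[str, float]],
    baseline: str = "general-llm",
    margin: float = 0.05,
) -> Dict[str, Tuple[str, float]]:
    """Map each use case to (best challenger, gain) when the gain >= margin."""
    findings: Dict[str, Tuple[str, float]] = {}
    for use_case, by_model in scores.items():
        if baseline not in by_model:
            continue  # nothing to compare against for this use case
        challengers = {m: s for m, s in by_model.items() if m != baseline}
        if not challengers:
            continue
        best = max(challengers, key=challengers.get)
        gain = challengers[best] - by_model[baseline]
        if gain >= margin:
            findings[use_case] = (best, round(gain, 2))
    return findings


# Hypothetical evaluation scores in [0, 1]; replace with real benchmark data.
SCORES = {
    "demand-forecasting": {"general-llm": 0.62, "time-series-model": 0.84},
    "contract-summarization": {"general-llm": 0.90, "small-edge-model": 0.78},
    "defect-inspection": {"general-llm": 0.55, "vision-language-model": 0.80},
}

for use_case, (model, gain) in find_portfolio_candidates(SCORES).items():
    print(f"{use_case}: consider {model} (gain {gain:+.2f})")
```

With these made-up numbers, forecasting and inspection are flagged while summarization stays with the general-purpose LLM. In practice the score would need to reflect cost and latency as well as accuracy.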

3. Invest in AI-ready data infrastructure

As models diversify, data becomes the unifying layer. Without the right data foundation, even the best models will underperform. The shift toward a multi-model world is driving a major evolution in data platforms, including:

- Converged data architectures

- Real-time pipelines

- Vector and multimodal databases

Action: Prioritize data readiness, including governance, pipelines, and accessibility across model types.

Final thought: AI success will depend on model orchestration

The organizations that win will be those that:

- Embrace model diversity

- Build flexible AI architectures that can quickly incorporate new model types and innovations

- Invest in AI-ready data architecture and orchestration

Because in the new AI landscape, it is not about having the best model. It is about having the right combination of models.

Learn more

For a deeper dive into how the model ecosystem is evolving and what it means for enterprise strategy, explore IDC’s latest research on AI model landscapes and emerging architectures.