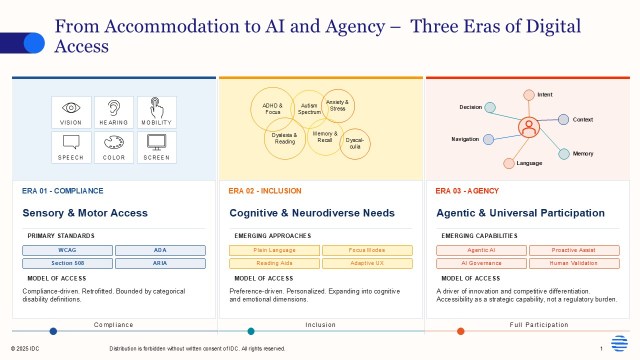

For years, digital accessibility (the practice of ensuring that digital products and services can be perceived, understood, and used by everyone, regardless of ability) was treated as a compliance checkbox. That framing is no longer adequate. AI is reshaping accessibility into a strategic capability: one that is adaptive, continuous, and embedded in how people work, interact, and innovate.

As AI-enabled work becomes the norm, accessibility is no longer about supporting a small subset of users. It is about ensuring that everyone, across physical, sensory, cognitive, and neurodiverse dimensions, can fully participate in increasingly digital and AI-mediated environments. In this context, accessibility becomes foundational to productivity, inclusion, and, ultimately, business performance.

Accessibility is also a matter of company culture. Involving disabled and neurodiverse individuals in co-design, not just testing, creates more robust and adaptable systems. Sustaining long-term impact requires investment in skills and culture: training employees, fostering inclusive design practices, and making accessibility a shared responsibility across teams.

The opportunity: AI as a scaler of inclusion and innovation

AI introduces a powerful opportunity to rethink accessibility at scale.

First, it enables real-time content adaptation. Capabilities such as automatic captioning, transcription, translation, and alternative text generation allow organizations to dynamically tailor content to different user needs. AI can also adjust reading levels, restructure complex information, and personalize interaction styles, supporting a broader range of cognitive and sensory preferences.

Second, AI supports continuous accessibility operations. Traditionally, accessibility has relied on periodic audits and remediation efforts. AI-driven testing tools now allow organizations to embed accessibility checks directly into development pipelines, transforming accessibility into a continuous, iterative process aligned with DevOps cycles.
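As a minimal sketch of what a pipeline check can look like, the Python script below fails a build step when any `<img>` element in the given HTML files has no `alt` attribute. It is a deliberately simple, rule-based stand-in, not an AI-driven tool: it uses only the standard-library `html.parser` module, and the names `MissingAltChecker` and `check_html` are hypothetical. Production testing tools cover far more criteria (contrast, heading structure, ARIA roles, keyboard focus) and still require human validation.

```python
import sys
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Hypothetical checker: records the position of every <img> with no alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.problems: list[tuple[int, int]] = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs. An empty alt="" is valid for
        # decorative images, so only a completely absent alt attribute is flagged.
        if tag == "img" and "alt" not in dict(attrs):
            # getpos() returns (line, column) of the tag: line is 1-based, column 0-based.
            self.problems.append(self.getpos())


def check_html(html: str) -> list[tuple[int, int]]:
    """Return the (line, column) positions of <img> tags missing an alt attribute."""
    checker = MissingAltChecker()
    checker.feed(html)
    checker.close()
    return checker.problems


def main(paths: list[str]) -> int:
    failures = 0
    for path in paths:
        with open(path, encoding="utf-8") as f:
            for line, col in check_html(f.read()):
                print(f"{path}:{line}:{col}: <img> is missing an alt attribute")
                failures += 1
    # A non-zero status tells the CI runner to fail this pipeline step.
    return 1 if failures else 0


if __name__ == "__main__":
    status = main(sys.argv[1:])
    if status:
        sys.exit(status)
```

In a CI job this might run as `python check_alt.py build/*.html` (the script name is hypothetical). Because the check runs on every commit rather than during a periodic audit, a missing text alternative is caught before it ships.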

Third, AI helps democratize innovation. By making tools and workflows more accessible, organizations can engage a wider and more diverse talent pool, including neurodiverse individuals and those historically underserved by traditional work environments. This expands creative input, improves problem-solving, and strengthens organizational resilience.

Finally, AI enables data-driven accessibility insights. Organizations can use AI to analyze accessibility barriers, monitor usage patterns, and measure outcomes, linking accessibility directly to business metrics such as productivity, employee engagement, and customer satisfaction.

Taken together, these capabilities shift accessibility from a cost center to a driver of innovation and competitive differentiation.

The pitfalls: Bias, complexity, and the risk of scaling barriers

Despite its promise, AI also introduces significant risks that organizations must actively manage.

One of the most critical challenges is bias in AI models. Many AI systems are trained on data, and designed by teams, that lack diversity. This can result in outputs that unintentionally exclude or disadvantage certain groups, particularly people with disabilities or non-standard interaction patterns. Without deliberate inclusion in design and testing, AI can reinforce existing barriers or create entirely new ones. Feedback loops that combine AI-driven insights with real user experiences are essential to countering this risk.

Another risk lies in inaccessible AI-generated content. While generative AI can produce fluent and polished outputs, these may still fail accessibility standards through improper structure, missing semantic cues, or formats that are difficult for assistive technologies to interpret. Auto-generated captions, for example, are often not accurate enough for compliance purposes.

The rise of agentic AI systems (autonomous AI that acts across workflows and applications without direct human instruction at each step) adds further complexity. If poorly designed, these agents can propagate inaccessible processes at scale, embedding friction into core operations rather than eliminating it.

There is also a governance challenge. As AI becomes embedded across systems, organizations must ensure clear accountability, transparency, and control over how accessibility preferences are handled, how decisions are made, and how user data is used.

Perhaps most importantly, over-reliance on automation can create a false sense of security. AI can scale testing and detection, but human validation, especially by people with disabilities, remains essential to identifying real-world usability issues.

Recommendations: Turning intent into impact

Organizations that want to lead in AI-enabled accessibility should focus on four key actions:

- Prioritize accessibility as a design principle. Move from reactive compliance to proactive, accessible-by-design systems embedded in AI-enabled platforms and services.

- Establish proactive AI accessibility governance. Integrate accessibility into AI governance frameworks early, ensuring inclusive workflows and avoiding costly retrofits.

- Design for workforce adaptability and inclusion. Extend accessibility strategies beyond compliance to support diverse employee needs, including neurodiversity, aging workforces, and varying cognitive styles.

- Act early to mitigate risk and maximize value. Early investment reduces remediation costs, strengthens trust, and positions accessibility as a strategic differentiator rather than a regulatory burden.

AI is redefining digital accessibility as a core element of how organizations operate, innovate, and compete. Those that embrace accessibility as a strategic priority will not only meet regulatory requirements but also unlock broader talent, improve user experiences, and build more resilient AI systems.