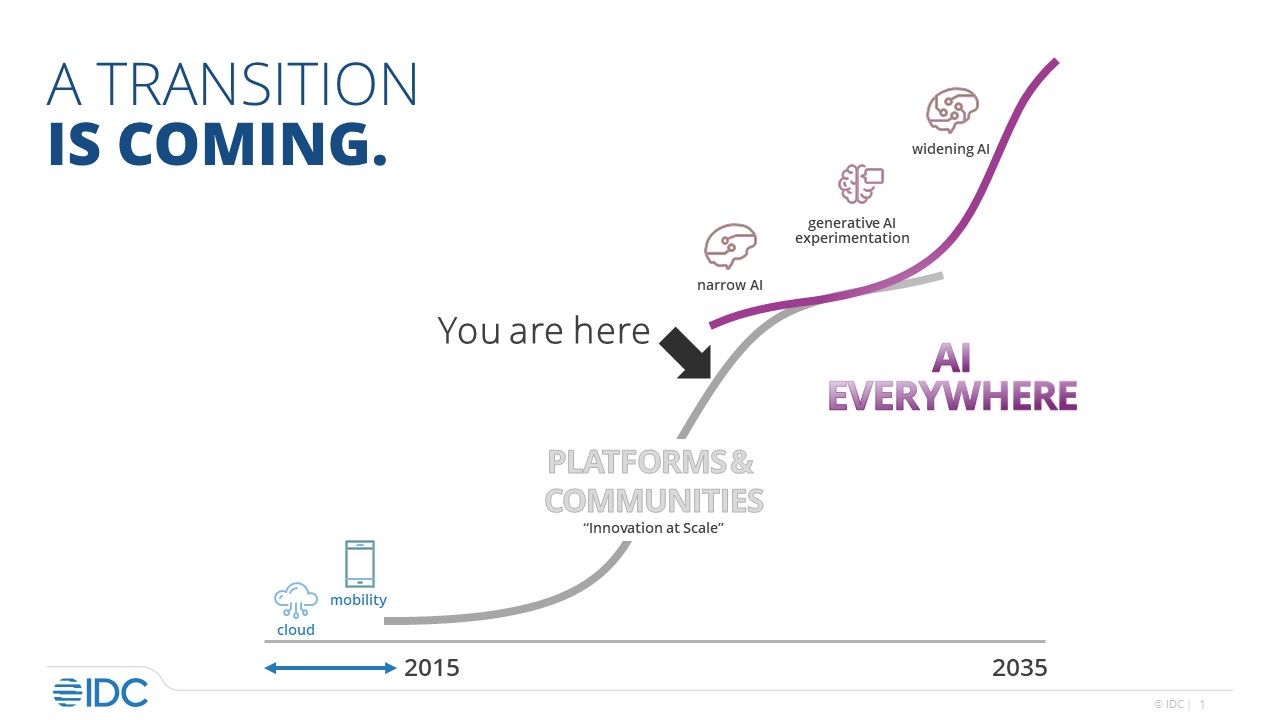

The Canadian tech industry has been experiencing a significant transformation in recent years, driven by market-destabilizing events, the rise of cloud computing, and the emergence of traditional infrastructure as a service (IaaS).

In light of these changes, IDC Research has conducted a special study titled “Canadian Channel Study in Transition 2022” to explore the role of channel partnerships in the Canadian tech landscape. The study reveals that a vibrant and dynamic channel ecosystem can provide significant business growth opportunities, whereas a stagnant channel poses challenges to the industry’s growth.

The report sheds light on the Canadian channel partner ecosystem, encompassing various solution partners serving multiple industries. The report reveals that Canadian channel partners are now 150% more involved in providing digital transformation solutions than before the COVID-19 pandemic. This blog explores the top trends shaping the Canadian Channel and key findings from this report.

Top Trends Reshaping the Canadian Channel Partner Approach for Business

IDC has watched several trends unfold in the Canadian channel partner ecosystem since 2015, when digital transformation started to take hold in large organizations. Canadian sales, service, and delivery partners of technology vendors sought ways to add value to clients, drive revenue generation, and develop new capabilities to enhance their profitability.

Numerous trends reshaped the strategies and plans of these individual businesses:

- A technology shift to cloud services.

- A shift in primary business activity from resale to services, with sales motions and buyer trends focusing on use cases that mattered to C-suite buyers.

- A shift in inter-company collaboration from a competitive mindset to connected ecosystem co-opetition.

- An increase in merger and acquisition activity in the channel as companies made strategic moves to meet market and client demand. Specialized players joined forces to scale up operations, while publicly traded IT providers consolidated independent entities. Those involved included CentriLogic and Carbon60, large services firms like KPMG and Accenture, and publicly traded IT providers like Converge Technology Solutions and Insight. These M&A activities are reshaping the landscape, creating new opportunities for collaboration and innovation.

- A shift towards on-demand or as-a-service infrastructure, embraced by both buyers and sellers who recognize the financial and technological benefits it offers.

What is the Pulse of the Canadian Channel?

We surveyed 203 companies in the Canadian channel and examined both emerging topics and topics observed in similar studies in 2016, 2017, and 2019. After analyzing the data, we used IDC’s proprietary Channel Partner Ecosystem (CPE) database to look for examples of Canadian channel players that illustrated the trends we saw.

The CPE database provides a comprehensive look at IT vendors, partners of IT vendors, and the entire channel partner ecosystem. It displays the links between IT vendors and their partners, highlighting the technological areas they cover, the markets they service, geographic location data, and much more. As a result, it depicts the service, solution, and geographic reach of a channel partner.

The CPE database contains information on more than 250,000 partners of 2,000+ technology vendors and identifies 1,000,000+ network relationships. Among the many vendors covered are Adobe, Amazon Web Services, Autodesk, Cisco, Citrix, Dell Technologies, Google, Hitachi, HP Inc., IBM, Intel, Intuit, Juniper, Micro Focus, Microsoft, NetApp, Oracle, Palo Alto Networks, Red Hat, Salesforce.com, SAP, SAS, ServiceNow, Siemens, Symantec, and VMware.

The database covers a wide geographic area, with data from 100+ countries and regions, including Australia, Canada, France, Germany, Hong Kong, Japan, the rest of Asia/Pacific, CEE, Latin America, the Middle East and Africa, Russia, the United Kingdom, and the United States.

The health of the channel is a barometer for the health of the Canadian technology industry. Simplifying and de-risking IT decision-making is critical to channel partners’ success.

Key findings from the Canadian Channel in Transition study include:

- For the typical channel partner in Canada, the share of revenue from resale products and services has remained nearly the same post-COVID (47%) as it was pre-COVID (48%). In contrast, recurring revenues have become a bigger slice of revenue, growing from 29% in 2019 to 42% in 2022.

- In 2022, the typical channel partner derived 35% of revenue from digital technologies, up from 18% pre-COVID in 2019.

- MSPs, SIs, and ISVs generate more of their revenue from large/enterprise (LE) companies, while resellers have a more balanced revenue mix between SMBs and LE companies.

- Over 85% of partnerships exist in the “undeclared,” or under-the-surface, areas (see Figure 1), which makes it difficult for a technology supplier to find the appropriate partner. To ease partner selection, IDC’s CPE database offers insight into partnerships that are not readily apparent or disclosed, going beyond top-tier partnerships to identify what is under the surface.

- Canadian channel partners are now 150% more involved in providing digital transformation solutions than before the COVID-19 pandemic.

FIGURE 1: IDC’s CPE Value — Finding Visible and Nonvisible Partners

As the Canadian economy emerges from the pandemic, the health of the channel becomes a barometer for the health of the technology industry. By embracing digital transformation and forming strategic alliances, channel partners can play a pivotal role in driving growth, innovation, and disruption across various sectors.

The Canadian Channel Study in Transition 2022 findings underline the need for businesses to recognize the value of channel partnerships and invest in fostering collaborative relationships to thrive in the rapidly changing Canadian tech landscape.

Ready to unlock the power of strategic channel partnerships? Gain exclusive access to the Canadian Channel Study in Transition or schedule a call with Jason Bremner to learn more about this study.