As an emerging tech vendor, you know that it can be a struggle to use content marketing to create brand awareness. Creating a compelling piece that will get the attention of your target audience takes a lot of research and time. Fortunately, there is an easy way to satisfy the need for content your audience craves: third-party content.

Third-party content is content that a credible, external source prepares for you, typically as a piece of thought leadership. Let’s have a look at the advantages of third-party content and how to best use it.

Build Trust with Third-Party Content

Working with a well-known, established partner will not only give you ready-made content, but will also help build trust in your brand’s expertise, expand your reach, and raise awareness in a noisy market.

Did you know that on average, tech buyers download six pieces of content throughout the tech purchase process? With a growing volume of content and a growing number of channels to choose from, they naturally prefer content from a trusted, well-known source. In fact, as many as 95% of tech buyers recommend that vendors add more insight from industry thought leaders and analysts to improve their content.

Working with an established source like an analyst can give you a piece of thought leadership that elevates your brand’s position in the market and builds a foundation of trust and credibility.

Prepare, Prepare, Prepare

Third-party partners will work with you to create content that is closely aligned with your marketing objectives. To get the best out of your new content piece, you must do your homework before briefing your partner.

- Which topic and keywords resonate most with your audience?

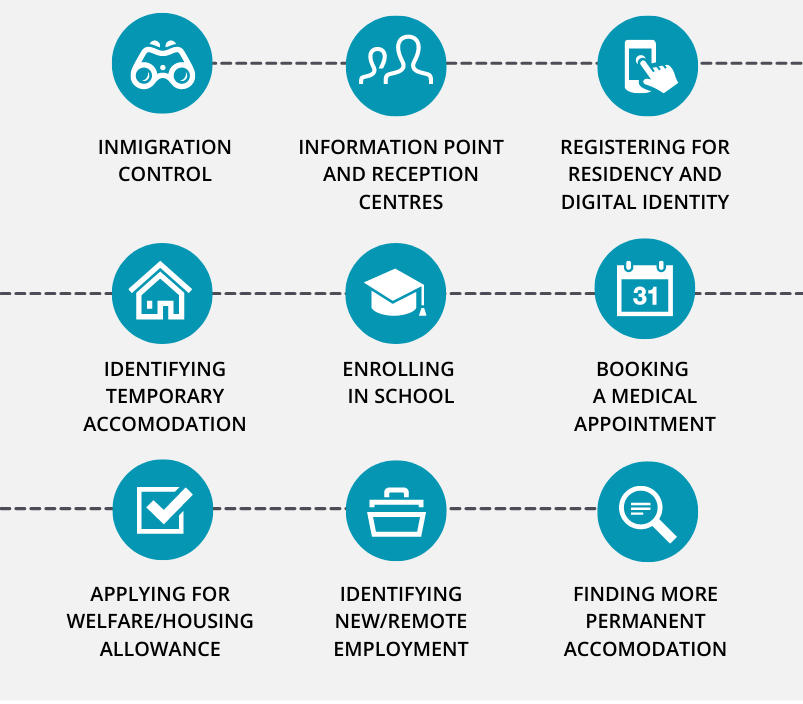

- Which style of research will best serve you?

- What’s the most compelling visualization that should accompany the piece of content?

Remember to keep in touch with your third-party representative while the piece is being created. If your external partner has dedicated Success Managers, they will make sure you have guidance from preparation to implementation.

Share

You might be tempted to take your new piece of content and publish it on all your marketing channels. This could lead to a spike in interest for your company. But some of those channels could be wrong for the message you are sending, or the timing could be off (holiday season, anyone?).

We recommend you keep these tips in mind when marketing your third-party piece:

Strategy: don’t use your content at the same time on every channel. You could start by publishing it on your website and social media, use it in a blog post in the following months, then refer to it in a digital discussion on LinkedIn. The possibilities are endless. Our new eBook includes a handy checklist for your next marketing campaign.

Be Insightful

When you share your third-party content, you don’t want to simply post the content and add a generic message like “Good read!”.

Instead, you want to personalize your message to your audience and tell them why this information is compelling for them and what they will gain from reading it. We are all busy and suffering from content overload, and adding this bit of information makes you stand out from the crowd.

Avoid Marketing Pitfalls

With a little bit of savvy marketing, a great piece of third-party content will create awareness and credibility. But failing to establish some details before the launch of your campaign will quickly hurt your ROI.

Make sure you:

- Are clear about your KPIs. What does success look like for this campaign? And for your company?

- Have a clear digital strategy and a concise marketing plan.

- Track your success and refine constantly.

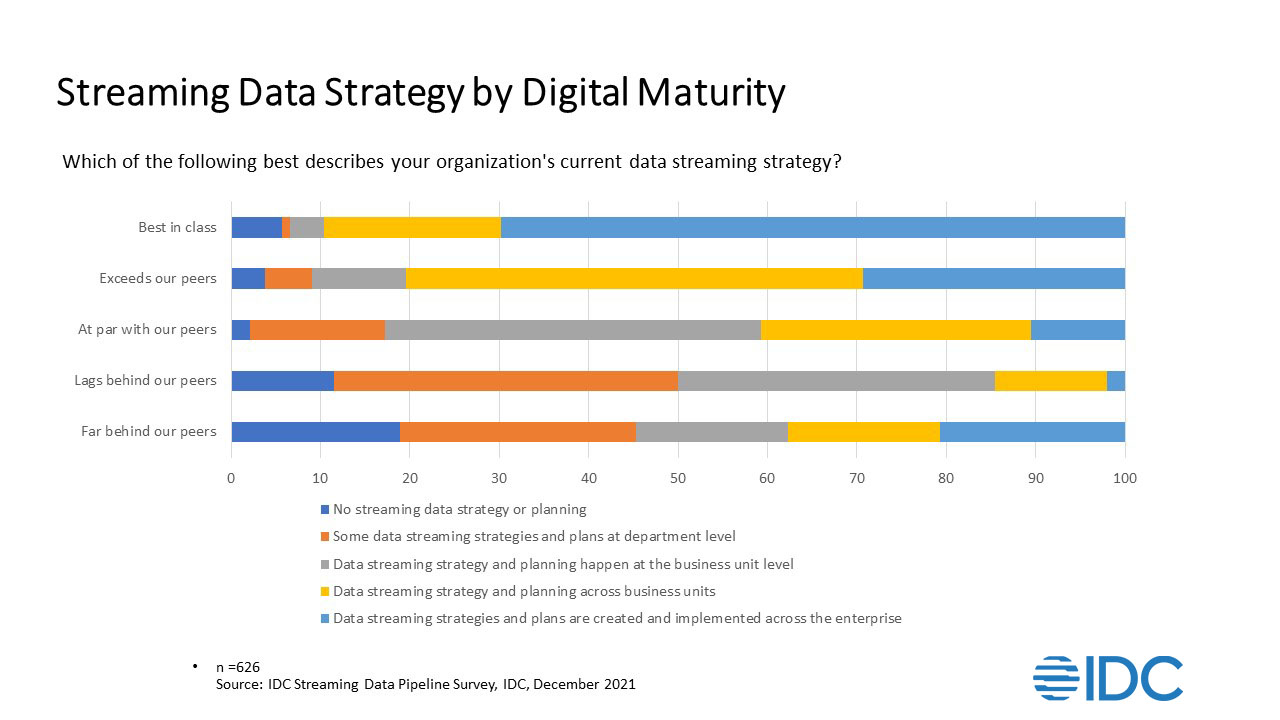

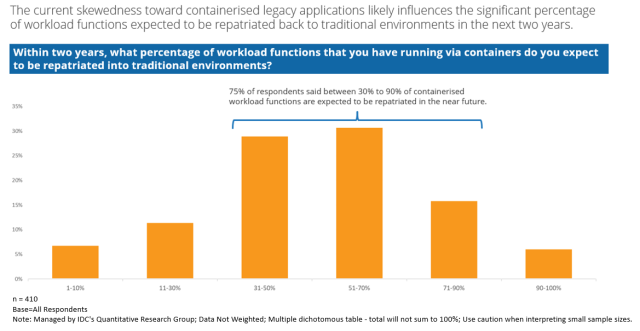

If you find these insights helpful and are looking for a partner, we might have the solution. IDC’s Thought Leadership Analyst Brief provides high-quality, leading-edge third-party content that elevates your brand image and associates your company with emerging technology trends, driving your global media coverage and market awareness and redefining your client dialogue.