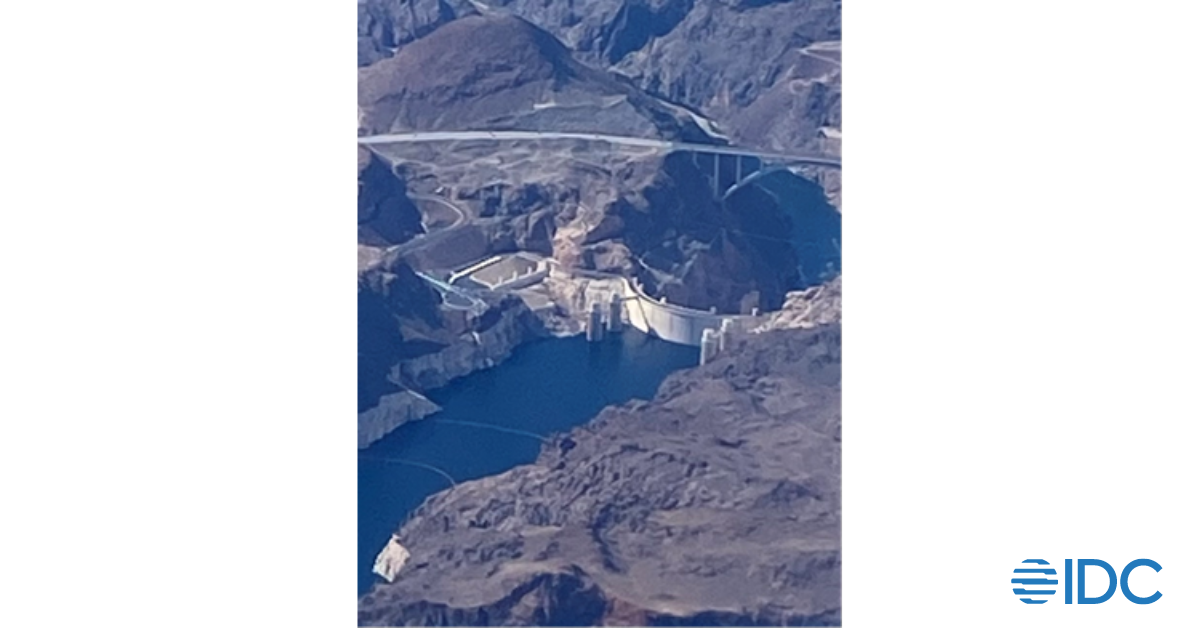

The plane ride to Shoptalk 2024 was a precursor of what was to come – a bit of a hike for a New Englander – the trip to Las Vegas took over 6 hours. Looking out my window seat, I witnessed the Hoover Dam and the accompanying Mike O’Callaghan-Pat Tillman arch bridge spanning the outcroppings in front of the dam.

Just like the bridge, the conference presented the opportunity to reach across the chasm and connect with retailers, vendors, and professionals in retail. Without doubt, it fulfilled this purpose.

The event drew about 10,000 people across 3 days, with a substantial expo floor and educational sessions. It opened with Sophie Wawro, President of Shoptalk, and Mike Antonecchia, SVP, discussing the focus of Shoptalk while standing on a sparkling stage that seemed right out of the Twilight Zone.

The duo mapped out the day’s events and agenda and opened the stage to the keynote speakers, including Colleen Aubry from Amazon and Tony Spring, CEO at Macy’s Inc., with additional thoughts about Reimagining Retail from Gina Boswell, CEO at Bath & Body Works. The conference had broadened its scope from prior years. More specifically, Shoptalk, better known for its digital focus, had adopted a track for Merchandising, Assortment and Supply Chain – entering deeper into the application of technology for the merchandising sector. Clearly, merchandising continues to be a hot topic for retail. We discuss some takeaways below.

The event is well known for its 1:1 meeting format, and frankly it felt like speed dating to get to know people in the industry. Shoptalk had refined its meetings from prior years and, most importantly, made the process much smoother and more straightforward, with announcements and a pair of gigantic digital clocks at either end of the Shoptalk meeting floor making sure we kept to time. The tables were tiny two-seaters you’d find in a cafe, but perfect for a quick conversation. As with any large event, as soon as the ‘voice of God’ announcements were made, decibel levels grew from a murmur to hundreds of conversations all at once, echoing off the walls of the Las Vegas Mandalay Bay event halls. However, I will say that most of these meetings were interesting enough to sit through the 15 minutes of introductions and beginnings of a conversation – which was the intent.

The meetings were diverse, including large retailers like Macy’s and Sainsbury’s but also ecommerce firms like Peapod, independent consultants, and fellow Rethink Retail Top Retail Experts (you know who you are!). Having been in the industry for decades, I found that not everyone was a new face. The folks I knew offered reinforcement on new research topics such as Retail Media or Generative AI, while others focused on my passion for Merchandising and Analytics. Some of the new folks I met surprised me with future-of-retail conversations that helped me learn and understand their perspectives. Expanding my horizons was the most rewarding part.

Beyond the speed meeting rituals, we had rounds with top retail vendors that continuously make inroads in the market. These included exciting meetings with Oracle in a Starbucks, innovation meetings with Blue Yonder at their booth, touching base with Zebra on their focused expansion into merchandise planning, and speaking with Centric Software, an interesting new addition to my merchandising and planning perspective.

I can’t leave out Bazaarvoice, which hosted a fantastic post-dinner engagement with deep conversations about retail marketing and the media angle. Other productive engagements included conversations with CDP firm Twilio about retail data.

Shoptalk 2024 offered a number of tracks, but my limited availability required that I focus on merchandising, assortment and supply chain, with a small selection of education tracks. The most exciting session I attended was an interview with Aimee Bayer-Thomas, Chief Supply Chain Officer at Ulta Beauty. Key learnings from this session revolved around the impact and perspective of technology in terms of supply chain:

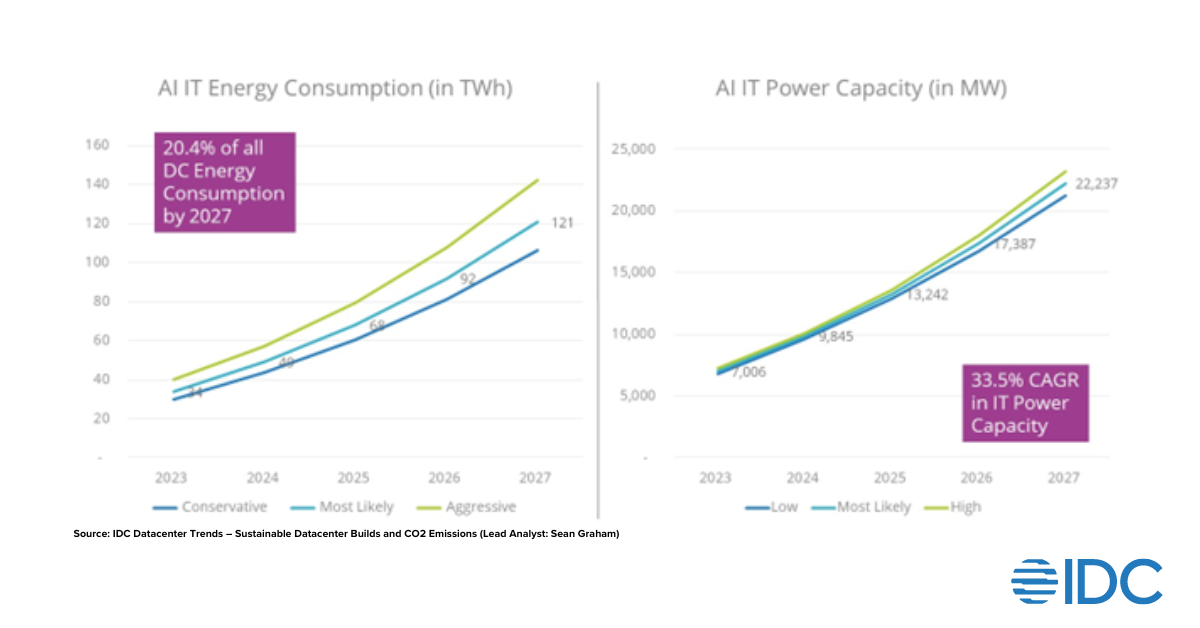

- Tech is needed in retail: While Ms. Bayer-Thomas highlighted this fact, it is not by chance that retailers adopt technology at a rapid clip. Overall IT spending in retail has grown at a pace of over 5.5% YOY, with expectations to grow to over 8% by 2027 (Source: IDC Data). For instance, Walmart incurred capital expenditures of almost $12 billion on supply chain, customer-facing initiatives and technology in just the United States, a growth of 28.4% over Fiscal Year 2023. Other large retailers are showing similar investments in technology innovation, with companies like Home Depot investing $150 million into a venture capital fund in 2022. Target announced $4 to $5 billion of investment in 2023 “to expand its guest-centric services, operations network of stores and supply chain facilities, digital experiences and other capabilities.” Clearly, the investment in technology isn’t slowing down, and retailers continue to leverage technology as a critical part of supply chain and merchandising.

- Tech is an enabler: This was also a focus of the Shoptalk session, where Ulta Beauty described leveraging tech to enable outcomes. According to Ms. Bayer-Thomas, “Tech must improve strategic imperatives.” The purpose of technology is to help distribution centers and micro-fulfillment centers within the organization operate more efficiently and smoothly. Technology must serve the end goals of these very different types of supply chain facilities, which must work in concert to yield the best results for retail.

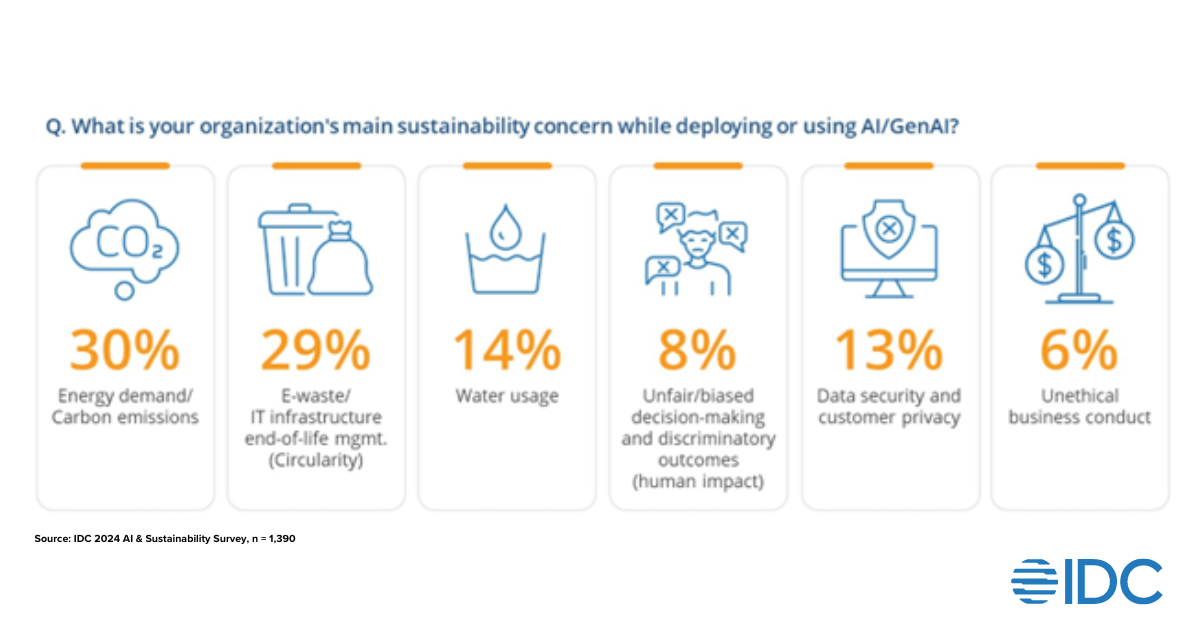

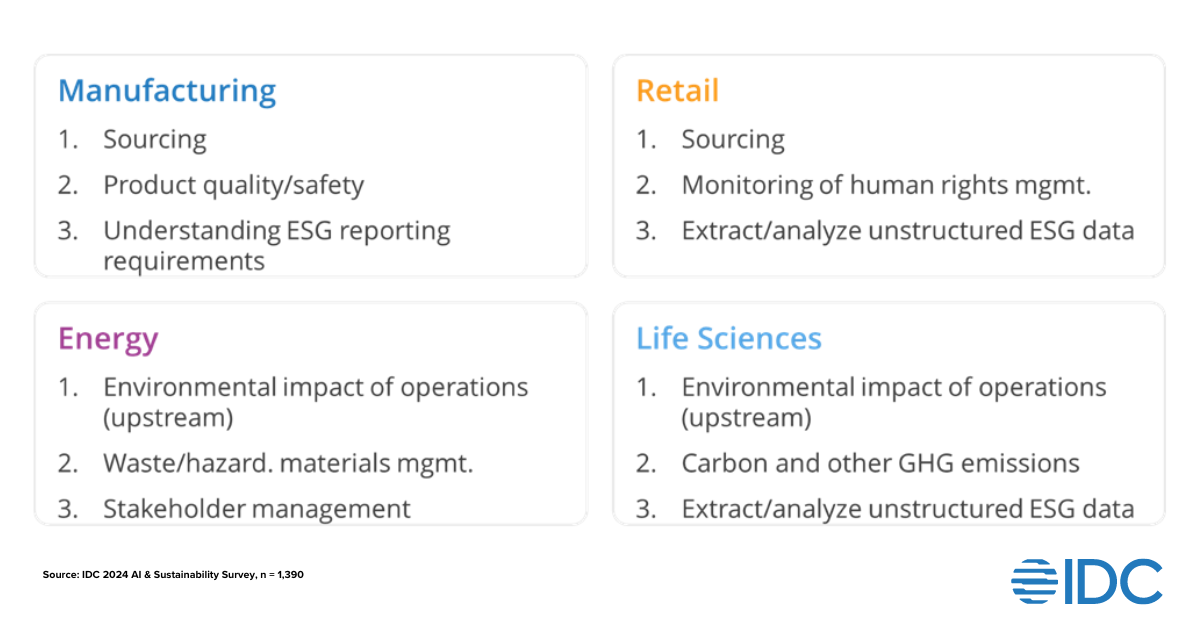

- Tech must fit the business: Not all technology is appropriate, and the criticality of the supply chain combined with the need to service the business means that any technology used must fit business needs. Ulta Beauty is balancing technology with investments in people, and must actively weigh the tradeoffs of new technologies so that they leave the company’s merchandising and supply chain better off than without the tech. Some technologies are worth examining, however. For instance, the growth of AI in retail since the launch of ChatGPT in the public arena has been exponential. Per Ms. Bayer-Thomas, “AI is something we’re watching.” More specifically, retailers are looking at AI for supply chain use cases, testing applications, and piloting AI capabilities across their organizations. Not all of these efforts coincide with supply chain or merchandising, but some highly interesting use cases have arisen. Find out more about such opportunities here: Understanding the Generative AI Use Case Landscape: The Industry Perspective

For a retailer, following these guidelines is mandatory. The rapidly changing world of technology intersects with the high-performing retail world, creating conditions where retailers must invest in technology. Doing this correctly will mean the difference between successful applications of technology and failed projects that miss the mark. Technology must address a business need and not be pursued for technology’s sake.

“Tech must improve strategic imperatives.” (Aimee Bayer-Thomas, Chief Supply Chain Officer, Ulta Beauty)

Any gathering of retailers, retail vendors, and a mix of adjacent professionals can be an opportunity for business value creation – but the most significant events proactively bring people together. Shoptalk 2024 is one of these avenues where business creation happens. Shoptalk is tagged as one of the top retail events, and my experience there in 2024 was the right kind of nudge, especially in understanding the criticality and balance required for applying technology to merchandising and supply chain. The event addressed many of the critical challenges in retail through content, people, and engagement with industry players.