After a year of disruptions like high inflation, war, geopolitical tension, energy shocks, and the anticipation of recession in major countries, it’s no surprise that IT leaders entered 2023 with a mission to minimize technology investments and develop plans for executing spending cuts if conditions worsened. Despite the uncertainty, their teams were running full tilt, filling open positions and “catching up” with the business.

Then along came ChatGPT. Suddenly, IT leaders find themselves planning for the coming Artificial Intelligence (AI) onslaught and asking, “Are we prepared?”

The Threat of IT Malaise

For the first few months of 2023, IDC noted that economic and IT spending outlooks of IT leaders in our monthly Future Enterprise Resiliency & Spending surveys began to improve. IT supply chains loosened, China reopened, energy shocks failed to develop, and the recession continued to be a worry for the future, not a reality of today.

In March, the Silicon Valley Bank failure, a series of banking problems, and concerns about a US debt default canceled out much of the growing economic optimism in the US and Europe, but not in Asia Pacific countries. A more troubling new concern that IDC heard from IT leaders starting in May was that the continued “waiting for recession” was starting to affect economic and IT investment assumptions for 2024, not just 2023.

It became easy to conclude that CIOs and IT leaders should be hunkering down to ride out an extended period of economic uncertainty and IT malaise, focusing on constraining new expenditures and optimizing the use of existing assets. While sustaining efforts to establish cloud economic practices is important, it’s no longer the top priority. Now is the time to start preparing for AI Everywhere.

Innovation Beyond IT Is a Rejuvenator, but Creates Disruption

Leveraging technology to drive innovation in our daily lives has been a key expansion driver for the entire IT industry since the start of the computer and digital communications eras of the 1950s. The most significant technology-driven transformations, such as the advent of the Internet/Web and the launch of the smartphone/cloud era, occurred at times when economic conditions were uncertain and questions were being raised about the marginal utility of new IT investments.

In both cases, individual consumers and business leaders “got ahead” of IT leaders, driving long-term fundamental changes in IT architectures and the role of IT organizations. Because IT leaders were unprepared, most organizations found themselves following a “Fire, Aim, Ready” pattern, resulting in unnecessary duplication of work and data/application fragmentation. Those IT organizations that were prepared in advance, executing a “Ready, Aim, Fire” strategy, emerged as the leaders in the new wave.

Generative AI Is the New Trigger but Requires Preparation Now

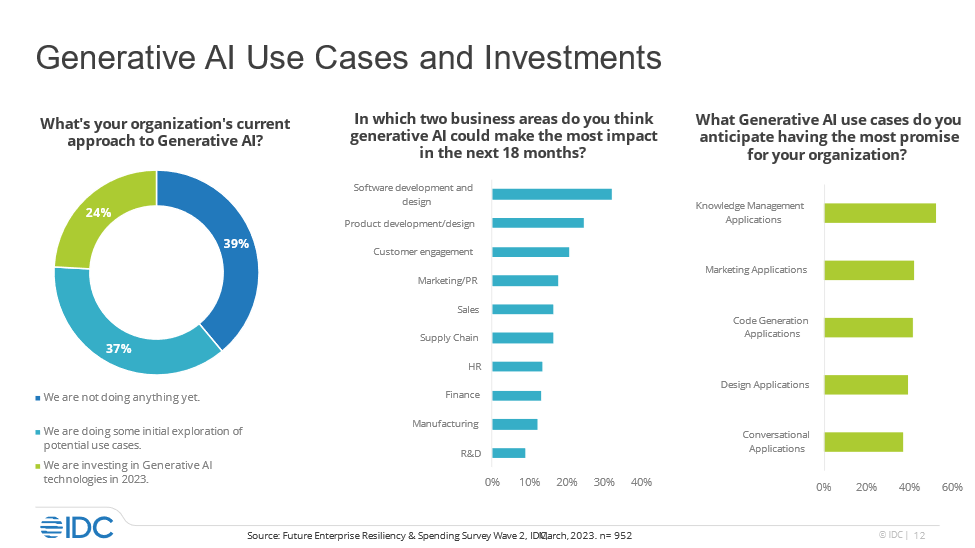

In this time of “perceived” IT malaise, the emergence of generative AI, exemplified by ChatGPT and DALL-E with all their possibilities and shortcomings, has captured the attention of individuals, educators, businesses, and governments around the world. As IDC found in conversations with CIOs and IT leaders, the assessment and use of AI is starting to dominate the planning and long-term investment agendas of businesses across many industries, triggering what IDC anticipates will be a period of extending AI Everywhere.

The semi-good news for most CIOs and CTOs is that generative AI products and services are still limited in availability and will be relatively immature for the rest of 2023 and early 2024. For all but a few organizations, the need to make hard decisions about committing significant treasure in a period of economic uncertainty will remain limited.

Every CIO and CTO, however, needs to start committing time and intellectual capital right now to ensure their organization is prepared, avoiding missteps and capitalizing quickly on the potential of AI across both IT and the business. The keys to ensuring your organization’s AI preparedness are assessing your level of AI awareness and determining your state of AI readiness.

AI Awareness: Take Stock and Aim for Consistency

Generative AI services like Jasper and Microsoft 365 Copilot, as well as all the buzz around foundation models, are the “bright shiny objects” right now. Everyone in the organization wants to talk about how they can transform everything from customer service to code and product design, but AI-enhanced capabilities in the areas of prediction (e.g., threat detection and digital twins) and interpretation (e.g., machine vision) are also likely to be well underway in selected parts of the organization.

Now is the time to conduct a comprehensive review of where in the organization AI initiatives of all types are underway. Ask, “What do we think AI can do and not do?” Use this effort to identify early areas where duplication threatens or collaboration beckons. It can also help you identify gaps where business leaders may be missing critical opportunities because they are distracted by one shiny object.

A key next step is to develop a series of persona-based AI awareness education activities that span from the C-Suite and business/IT leaders to frontline employees and even critical customers and partners. The goal isn’t to make everyone an AI expert. It’s to ensure that your organization is consistently “AI aware” as readiness assessments start and commitment decisions are made.

AI Readiness: Focus on Cloud Native, Hybrid Cloud, and Control

As with many innovations, the ability to quickly adopt a transformational technology is determined by the existing level of technical sophistication and IT operational maturity of the organization. For example, companies that aggressively adopted virtualization as a technology for deploying and managing workloads on their own systems were able to more effectively adopt early public cloud infrastructure solutions that leveraged similar foundational technologies.

Cloud providers will play a significant role in the early introduction of generative AI enablement services, and agile DevOps teams will play an equally important role in translating AI capabilities into useful business outcomes. Cloud pioneers and pacesetters with mature cloud operations and architecture models, along with well-managed DevOps processes, will be better prepared to leverage AI than cloud laggards.

Beyond the cloud-native maturity noted above, IDC believes that companies with mature “hybrid-by-design” cloud strategies will be well positioned to take full advantage of AI innovation across many different cloud environments as well as across many different locations, from the core through the network to the edge.

Now is also the time to ask, “Are we ready for AI Everywhere?” The key areas of a critical AI readiness assessment center on control. How consistent/inconsistent and open/siloed are data management and data use practices/guidelines? How standardized/fragmented and trustworthy/unreliable are code creation and life cycle systems and standards? How mature are FinOps and Cloud Cost Optimization practices?

Addressing the data questions will accelerate the need to address responsible AI governance and ethics with forethought and readiness. Mature cost and ROI tracking will be critical since “cost” will remain one of the most unpredictable elements of generative AI rollouts for the next several years.

The tech industry is thrilled by the possibilities of AI! This includes silicon designers, cloud providers, software and services clients, and even your own IT teams. The sense of anticipation and even giddiness is palpable, signaling a renewed focus on innovation as the driver of technology. Success, however, depends upon your having confidence in your ability to accurately track and link near- and long-term costs with desired business benefits.

Cost/benefit readiness is the key skill required when it’s time to commit to AI, especially in this time of economic uncertainty and the threat of succumbing to IT malaise.