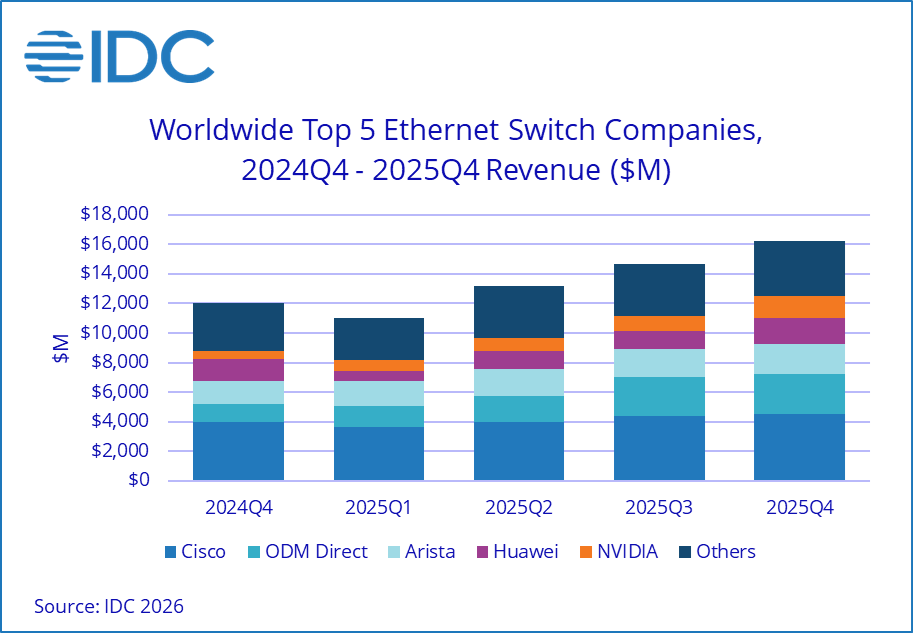

AI-driven infrastructure demand is accelerating investment in datacenter networking, reshaping the Ethernet switch market.

The datacenter portion of the Ethernet switch market continued its strong growth in the fourth quarter of 2025 (4Q25), rising 63.0% year over year (YoY) to reach $9.9 billion, driven by the build-out of datacenter network infrastructure to support AI workloads.

The total Ethernet switch market, inclusive of both datacenter and non-datacenter segments, grew 35.1% YoY to reach $16.2 billion in 4Q25. For the full year, revenue totaled $55.1 billion, up 31.5% YoY.
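The year-over-year figures above can be converted into approximate year-ago values by dividing each current figure by one plus its growth rate. The following is a minimal Python sketch of that arithmetic, not IDC's methodology. The function name `prior_period` and the variable names are illustrative assumptions, and because the published inputs are rounded, the results are approximations only.

```python
def prior_period(current: float, yoy_pct: float) -> float:
    """Back out the year-ago value implied by a current value and its YoY growth (%)."""
    return current / (1 + yoy_pct / 100)


# Figures quoted in the text, in billions of US dollars (rounded as published).
dc_4q25, dc_yoy = 9.9, 63.0               # datacenter segment, 4Q25
total_4q25, total_yoy = 16.2, 35.1        # total Ethernet switch market, 4Q25
total_fy25, total_fy_yoy = 55.1, 31.5     # total Ethernet switch market, full year 2025

# Implied year-ago values (approximate, since the inputs are rounded).
dc_4q24 = prior_period(dc_4q25, dc_yoy)
total_4q24 = prior_period(total_4q25, total_yoy)
total_fy24 = prior_period(total_fy25, total_fy_yoy)

# Non-datacenter revenue is the residual: total minus datacenter.
non_dc_4q25 = total_4q25 - dc_4q25

print(f"Implied 4Q24 datacenter revenue: ${dc_4q24:.1f}B")
print(f"Implied 4Q24 total revenue:      ${total_4q24:.1f}B")
print(f"Implied 2024 total revenue:      ${total_fy24:.1f}B")
print(f"4Q25 non-datacenter revenue:     ${non_dc_4q25:.1f}B")
```

Under these assumptions, the non-datacenter segment works out to roughly $6.3 billion in 4Q25, which is simply the difference between the two quarterly totals quoted above.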

Ethernet switch market highlights

- Datacenter segment: The datacenter portion of the Ethernet switch market grew 53.5% YoY in 2025 to reach $32.5 billion. High-speed datacenter switches (800G) accounted for 25.8% of 4Q25 revenues and 16.4% of full-year revenues, while 200G/400G speeds represented 43.9% of full-year revenue, reflecting rapid adoption of higher-speed networking to support AI workloads.

- Non-datacenter segment: Ethernet switches used in enterprise campus and branch networks grew 6.4% YoY in 4Q25 and 9.1% for the full year, reflecting steady investment in enterprise infrastructure.

- Regional performance: Ethernet switch revenues grew in all regions of the world in both 4Q25 and the full year. The Americas led with 45.4% YoY growth in 4Q25 and 40.2% for the year. EMEA posted 28.3% growth in 4Q25 and 23.1% for the year, while Asia Pacific saw 23.3% growth in 4Q25 and 23.5% for the year.

Router market highlights

The total router market, inclusive of both service provider and enterprise segments, rose 11.5% YoY in 4Q25 and increased 11.2% for the full year 2025 to reach $15.0 billion.

- Service provider segment: The service provider segment (including communications and cloud SPs) made up 74.4% of total router market revenues in 4Q25 and increased 12.8% YoY.

- Enterprise segment: The enterprise router market makes up the balance of revenues and grew 7.5% YoY in 4Q25, reflecting ongoing investment in enterprise wide area networking (WAN) connectivity.

- Regional performance: In 4Q25, the Americas router market rose 15.9% YoY, EMEA increased 16.2%, and APJ grew 3.8%.
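The segment figures above can be cross-checked against the headline router growth rate. The sketch below, in Python, backs out each segment's implied year-ago weight from its 4Q25 share and growth rate, then recombines them into a blended growth figure. It is an illustrative consistency check rather than IDC's method. The function name `blended_growth` is hypothetical, and the enterprise share is assumed to be the remainder after the service provider share ("the balance of revenues").

```python
def blended_growth(segments):
    """Return the total YoY growth (%) implied by per-segment shares and growth rates.

    segments: iterable of (current_share_pct, yoy_growth_pct) pairs.
    Each segment's year-ago weight is its current share divided by (1 + growth).
    """
    segments = list(segments)
    current_total = sum(share for share, _ in segments)
    prior_total = sum(share / (1 + yoy / 100) for share, yoy in segments)
    return (current_total / prior_total - 1) * 100


# 4Q25 router figures quoted in the text (rounded as published).
sp_share, sp_yoy = 74.4, 12.8        # service provider segment
enterprise_share = 100 - sp_share    # assumption: enterprise is the remainder
enterprise_yoy = 7.5

implied_total_yoy = blended_growth([
    (sp_share, sp_yoy),
    (enterprise_share, enterprise_yoy),
])
reported_total_yoy = 11.5

print(f"Implied total router growth:  {implied_total_yoy:.1f}%")
print(f"Reported total router growth: {reported_total_yoy:.1f}%")
```

With the rounded inputs published here, the blended figure lands within a few tenths of a percentage point of the reported total, a gap that may reflect rounding in the published shares and growth rates.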

Vendor highlights

Vendor performance reflects the shift toward AI-driven datacenter demand.

- Cisco: Cisco’s total Ethernet switch revenues increased 13.5% YoY in 4Q25 to $4.5 billion, capturing 27.6% market share. Non-datacenter segment revenues (63.9% of Cisco’s total) grew 7.5% YoY, while datacenter segment revenues rose 26.0% YoY. Cisco’s total router revenue increased 22.5% YoY, giving the company a 30.6% market share.

- Arista Networks: With 92.6% of its Ethernet switch revenues in the datacenter segment, Arista’s revenues grew 31.4% YoY in 4Q25 to $2.0 billion. Arista holds a 12.6% share of the total Ethernet switch market and 19% in the datacenter segment.

- Huawei: Huawei’s total Ethernet switch revenue increased 14.0% YoY in 4Q25 to $1.7 billion, giving the company a market share of 10.6%. Huawei’s router revenue increased 6.5% YoY in 4Q25, giving the company a 30.2% market share.

- NVIDIA: NVIDIA’s Ethernet switch revenues, entirely from the datacenter segment, surged 192.6% YoY to $1.5 billion in 4Q25, giving it a 15.2% share of the datacenter segment.

- HPE: HPE’s total Ethernet switch revenue (66.8% from non-datacenter) increased 11.7% YoY in 4Q25, reaching a 6.7% market share. Following its acquisition of Juniper Networks, completed in July 2025, HPE’s reported revenues now include Juniper’s.
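The vendor revenues and market shares above can be sanity-checked against the 4Q25 market totals quoted earlier ($16.2 billion overall, $9.9 billion for the datacenter segment). The Python sketch below is a rough check under stated assumptions: each dollar figure is treated as rounded to the nearest $0.1 billion, and the helper `share_bounds` (a hypothetical name) returns the range of shares compatible with that rounding. HPE is omitted because no 4Q25 revenue figure is given for it.

```python
TOTAL_4Q25 = 16.2       # total Ethernet switch market, $B (as quoted)
DATACENTER_4Q25 = 9.9   # datacenter segment, $B (as quoted)


def share_bounds(revenue, market, portion=1.0, step=0.1):
    """Range of market shares (%) compatible with revenue and market both
    being rounded to the nearest `step` (in $B).

    `portion` scales revenue to a sub-segment, e.g. 0.926 for the part of a
    vendor's revenue that comes from the datacenter segment.
    """
    half = step / 2
    low = (revenue - half) * portion / (market + half) * 100
    high = (revenue + half) * portion / (market - half) * 100
    return low, high


# (label, revenue $B, market $B, portion of revenue counted, reported share %)
checks = [
    ("Cisco, total market", 4.5, TOTAL_4Q25, 1.0, 27.6),
    ("Arista, total market", 2.0, TOTAL_4Q25, 1.0, 12.6),
    ("Huawei, total market", 1.7, TOTAL_4Q25, 1.0, 10.6),
    ("NVIDIA, datacenter", 1.5, DATACENTER_4Q25, 1.0, 15.2),
    ("Arista, datacenter", 2.0, DATACENTER_4Q25, 0.926, 19.0),
]

results = {}
for label, revenue, market, portion, reported in checks:
    low, high = share_bounds(revenue, market, portion)
    results[label] = low <= reported <= high
    status = "consistent" if results[label] else "outside range"
    print(f"{label}: reported {reported:.1f}%, implied {low:.1f}%-{high:.1f}% ({status})")
```

Each reported share falls inside the range implied by the rounded dollar figures, so small gaps between a naive revenue-over-market division and the published share are plausibly explained by rounding alone.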

Market dynamics

- Demand for speed and low latency: Organizations are investing in higher-speed switches to support AI-driven and other demanding workloads, fueling growth in datacenter and high-speed segments.

- AI workloads: The proliferation of AI applications is pushing enterprises to upgrade their networks for both bandwidth and latency improvements.

- Global uncertainty (constraint): While macroeconomic and geopolitical uncertainty persists, it has not significantly dampened investment in critical network infrastructure.

“The 4Q25 results highlight the scale of the AI-driven boom in datacenter networking. Hyperscalers, cloud providers, and increasingly enterprises are turning the datacenter network into critical infrastructure, driving strong demand for high-speed Ethernet switching.” — Brandon Butler, Senior Research Manager, Network Infrastructure and Services, IDC

Why it matters

- Who should care? CIOs, network architects, IT buyers, and technology vendors should note this acceleration, as it signals a renewed investment cycle in network infrastructure.

- Business impact: Upgrading to faster, more reliable networks enables more responsive applications, enhances employee and customer experiences, and supports platforms for faster decision-making.

- Ecosystem signal: The market’s robust growth highlights the strategic importance of network modernization, especially as organizations deploy AI and data-intensive applications.

What’s next for the Ethernet switch market

IDC expects continued momentum in the Ethernet switch market as enterprises prioritize network modernization to support AI, cloud, and real-time applications. Growth could accelerate further as AI investments increase, particularly in datacenter AI factories, and as more inferencing use cases emerge. However, new supply chain concerns around memory and persistent global uncertainty remain headwinds. Watch for ongoing investments in datacenter upgrades and higher-speed switch deployments in the coming quarters.

For deeper analysis, see the IDC Quarterly Ethernet Switch Tracker and IDC Quarterly Router Tracker.