Generative AI has wowed consumers across the globe with its ability to find information and author high-quality content. For enterprises, the use cases are still being explored and defined. In this blog, we will explore a potential ‘killer app’ for generative AI: the virtual mentor, a new approach to learning and onboarding.

In today’s organizations, the vast majority of mentoring is done by speaking to experienced colleagues, looking for answers on the public internet or on company-specific intranets, trawling through various PDF guides and presentations, or perhaps attending e-learning courses or classroom sessions. The problem is that existing technologies and approaches offer no easy way for employees to find the information they need.

Current e-learning and onboarding solutions struggle with multiple challenges. Firstly, the content is costly and time-consuming to produce. Secondly, it quickly becomes outdated and is generally static, once produced. Thirdly, the one-size-fits-all standard approach to learning and onboarding doesn’t quite meet the needs of the individual, who already knows all about A but would like to deep-dive into B.

We believe that generative AI will be a game changer in solving these problems because, for the first time, the systems themselves can generate the needed learning content. Future virtual mentors will meet many of today’s unserved learning and onboarding needs. Employees will be able to interact digitally, remotely or in the office, intensively or in drip-feed style, and the learning content will be created on the fly, determined largely by the nature of the interaction and the learner’s queries.

AI-Powered Virtual Mentor vs. Previous Learning Approaches

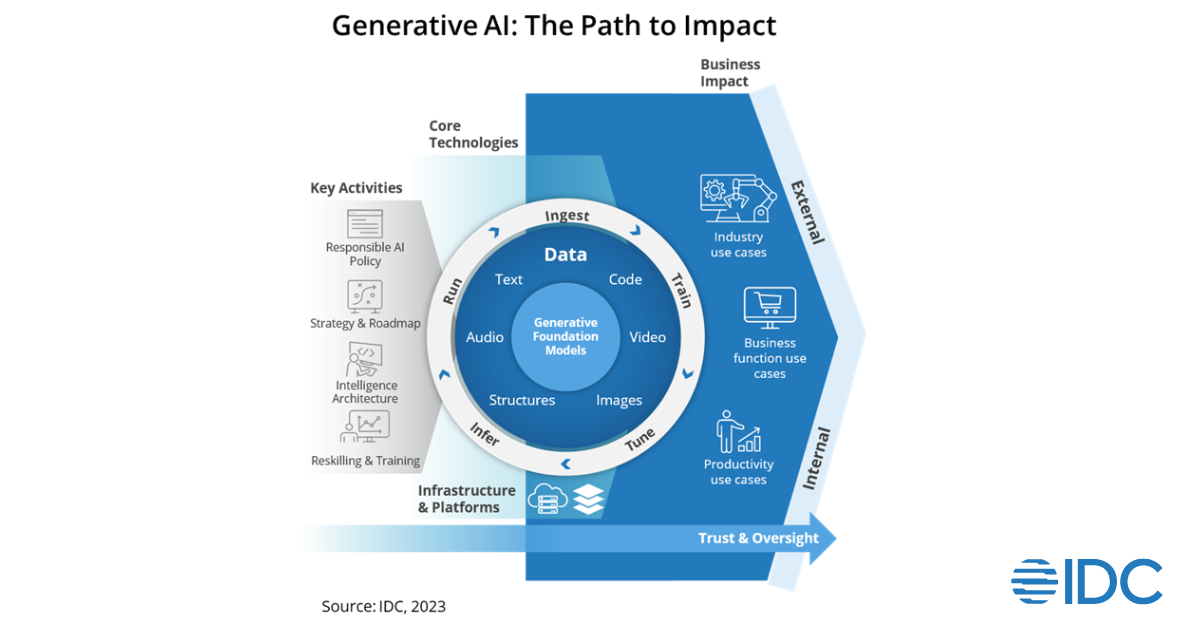

First of all, let’s define generative AI. We define generative AI as a branch of computer science that involves unsupervised and semi-supervised algorithms that enable computers to create new content using previously created content, such as text, audio, video, images and code.

Secondly, let’s define what an AI-powered virtual mentor is. We envision the AI-powered mentor to have the following characteristics:

- Always available. Like Microsoft’s failed personal digital assistant Clippy (remember the animated talking paperclip?), a virtual mentor will be an omni-available resource to the learner.

- Creates content itself. If fed enough material, a generative AI-powered virtual mentor will be able to create the relevant teaching material itself by synthesizing existing content.

- Conversational. Just like a real-life, human mentor, the AI-powered virtual mentor interacts via conversation. The human mentor converses verbally, while the virtual mentor works best via written conversation (although verbal user experience is on its way, as well).

- Adaptive. A virtual mentor goes far beyond what is known today as ‘adaptive learning’, i.e., an e-learning experience with some variation in the course depending on the individual learner. A virtual mentor can freestyle and go where the learner would like to go within a general topic area.

An employee would be able to ask a wide variety of general questions to the virtual mentor, such as:

- What is the pricing structure for product X?

- Do we have representation in Peru?

- What are the key new features in version YY.YYY of product Z?

- What is the expense management policy for a client meeting?

- Who in my company works with [expertise area]?

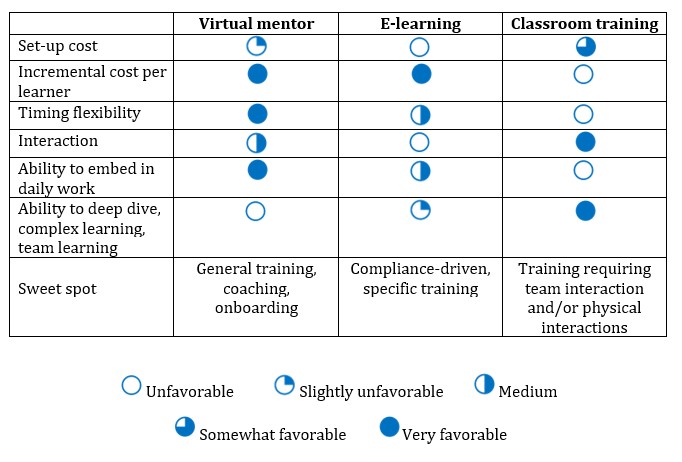

Let’s compare working with a generative AI-powered virtual mentor with traditional e-learning and classroom training:

Why Do We Need Virtual Mentors When We Already Have ChatGPT and Similar Generative AI Platforms?

ChatGPT is of limited use in an enterprise context for one simple reason: Employees using the platform are likely to reveal sensitive company information. This is why many organizations have restricted or banned the use of ChatGPT among employees.

Just imagine an employee at a healthcare provider uploading the raw transcript of an internal meeting regarding the cancer treatment of patient XX and asking for abbreviated meeting minutes. Such an upload to a public internet system would constitute a major violation of the privacy of patient XX.

Virtual mentors, on the other hand, would leverage the public internet-based Large Language Models but would not feed any inquiries from employees back to the public internet. Such ChatGPT replicas in a confined corporate setting will be the first wave of generative AI virtual mentors that we are going to see on the market.

These will, in other words, be general-purpose virtual mentors based upon public internet information. They can be adopted by organizations of any size and are ready to use immediately.

A subsequent wave of virtual mentors will be based on curated content specific to a functional area or an industry or similar. Such specialized content virtual mentors will be sold by vendors that are in charge of curating content and maintaining the AI solution.

A virtual mentor in the area of accounting could be offered by a learning content provider or, alternatively, by an accounting solution provider. Some specialized virtual mentors could be provided as free add-ons to commercial software subscriptions.

Finally, we will see a wave of organization-specific virtual mentors that will act as experts in one organization. In this case, the organization itself would be in charge – possibly aided by a services provider – of feeding the system with learning material.

A product manufacturer would input all manuals, product FAQs, marketing material, customer service interactions, HR policies, internal communication, public pricing information, everything on the intranet and company internet sites, training materials, etc. That solution could be very helpful in onboarding new employees and in answering inquiries from existing employees. However, it would take time and resources to implement, and it would require a certain company size to be worthwhile.

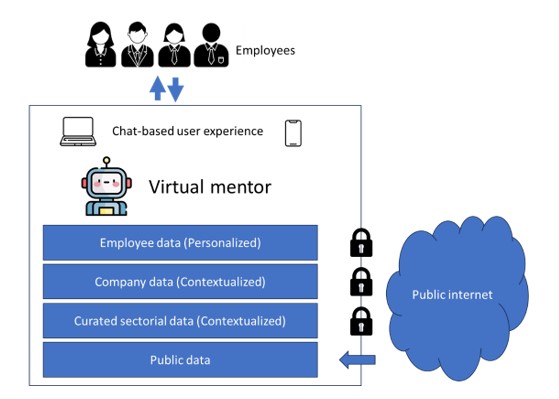

The figure below shows the different levels of data feeding into a virtual mentor. The interaction between the virtual mentor and the employee will be chat-based to begin with. However, in the medium term, interaction could also be done through verbal communication, games, metaverses, augmented reality, etc.
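To make the layering idea concrete, here is a minimal sketch of how a mentor might consult the most specific layer first and fall back to broader ones. Everything in it is an assumption for illustration: the layer names, the `Passage` type, the `retrieve` function and the simple keyword-overlap scoring are hypothetical, and a real solution would more likely use a language model with a proper search index.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical layer names, ordered from most to least specific.
LAYERS = ["company", "industry", "public"]


@dataclass
class Passage:
    layer: str  # which data layer the passage belongs to
    text: str   # the curated content itself


def tokenize(text: str) -> set[str]:
    """Lowercase the words, strip basic punctuation, drop very short words."""
    words = (w.strip(".,:;?!").lower() for w in text.split())
    return {w for w in words if len(w) > 2}


def retrieve(question: str, passages: list[Passage], min_overlap: int = 2) -> Passage | None:
    """Return the best-matching passage from the most specific layer that
    shares at least `min_overlap` words with the question, else None."""
    q = tokenize(question)
    for layer in LAYERS:
        candidates = [p for p in passages if p.layer == layer]
        best = max(candidates, key=lambda p: len(q & tokenize(p.text)), default=None)
        if best is not None and len(q & tokenize(best.text)) >= min_overlap:
            return best
    return None  # nothing relevant found: better to say so than to guess


passages = [
    Passage("company", "Expense policy: client meeting meals are reimbursed up to the approved limit."),
    Passage("industry", "Accounting standards require expense receipts to be retained."),
    Passage("public", "Peru is a country in South America."),
]

hit = retrieve("What is the expense policy for a client meeting?", passages)
print(hit.layer if hit else "no answer")
```

In this sketch, a question about expenses is answered from the company layer, a question about Peru falls through to the public layer, and an unrelated question returns nothing rather than a guess.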

Evidence of Generative AI Replacing Existing Digital Learning and Coaching Solutions

Chegg, an established American education technology (EdTech) company known for textbook rentals, online tutoring, and a variety of student services, was among the entities to feel the competition from generative AI. Their initial projection regarding generative AI tools, such as ChatGPT, was that these technologies would take a longer period to truly influence the market.

However, the release and subsequent popularity of GPT-4 among students, credited to its swift response time, efficiency, and affordability, led to a sales slowdown and a dramatic Chegg stock price decline of 48% in early May 2023.

In response to these trends, Chegg entered into a partnership with OpenAI in April 2023, leading to the development of CheggMate. This tool, which is still in its development phase, intends to amalgamate GPT-4’s generative AI capabilities with Chegg’s existing question database.

The goal for CheggMate is to enhance user experience by better aligning user queries with the most suitable resources.

Other EdTech vendors, including Duolingo, have unveiled new AI-driven features. Specifically, Duolingo introduced a role-play chat where users can learn a language by conversing with an AI. After these interactions, they receive feedback and suggestions to enhance their language-learning journey.

We have also witnessed the first examples of generative AI approaches in mentoring. CoachHub, a leading vendor of digital coaching solutions, recently unveiled AIMY, a virtual AI-powered career coach rooted in OpenAI’s ChatGPT. AIMY is designed to let users try personalized coaching sessions without any human interaction and without the costs associated with traditional coaching. It emulates human-to-human coaching, is still in its beta phase, and is not yet able to manage overly complex discussions.

Challenges to Overcome for Virtual Mentor Solutions

Adopting virtual mentor solutions for learning, onboarding, and coaching purposes is not without challenges. Here are a few key obstacles that organizations might encounter when introducing these new AI-driven solutions:

- Data privacy and security concerns. The first cases of data breaches related to the use of generative AI solutions by employees have already emerged, such as Samsung’s discovery of staff uploading a variety of sensitive information to ChatGPT. Future virtual mentor solutions will not feed data back to public generative AI systems, such as ChatGPT.

As shown in the figure above, virtual mentors will use a combination of user data, curated company data, curated industry or functionally specific data as well as publicly available data as training material. Such approaches will limit the risk of data breaches significantly.

However, adoption will require significant attention to security-related aspects, such as ensuring robust encryption, compliance with data protection regulations, etc.

- Implementation complexity and skills gap. Introducing virtual mentor solutions on top of existing data is likely to require specialist AI training skills, which many organizations might not possess. In terms of the overview figure above, the company-specific layer presents the biggest challenges. This is because training material is limited (compared to the vast number of resources available on the public internet) and because training material must be curated, updated, deleted (in case of obsolete material), etc.

- Risk of hallucinations. AI-driven virtual mentors can produce “hallucinations” or inaccurate answers. In a mentoring context, this can lead to confusion or misguidance and, ultimately, to employees rejecting the mentor system as unreliable. The risk of hallucinations by the virtual mentor means that organizations will have to dedicate resources to quality assurance, a ticketing system for incorrect or inappropriate answers, etc.
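As an illustration of the ticketing idea in the last point, the sketch below shows one possible shape for a review queue in which employees flag questionable answers and a reviewer later resolves them. The `Ticket` and `ReviewQueue` names and their fields are hypothetical. They are assumptions made for this example and do not describe any vendor’s product.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from itertools import count


@dataclass
class Ticket:
    ticket_id: int
    question: str   # what the employee asked
    answer: str     # what the virtual mentor replied
    reason: str     # why the employee flagged the answer
    resolved: bool = False


@dataclass
class ReviewQueue:
    """Hypothetical in-memory queue of flagged answers awaiting human review."""
    tickets: list[Ticket] = field(default_factory=list)
    _ids: count = field(default_factory=lambda: count(1))

    def flag(self, question: str, answer: str, reason: str) -> Ticket:
        """Record a flagged answer and return the new ticket."""
        ticket = Ticket(next(self._ids), question, answer, reason)
        self.tickets.append(ticket)
        return ticket

    def resolve(self, ticket_id: int) -> None:
        """Mark a ticket as reviewed, e.g. after the source content was corrected."""
        for ticket in self.tickets:
            if ticket.ticket_id == ticket_id:
                ticket.resolved = True

    def open_tickets(self) -> list[Ticket]:
        """Return the tickets that still need a reviewer's attention."""
        return [t for t in self.tickets if not t.resolved]


queue = ReviewQueue()
first = queue.flag("Do we have representation in Peru?", "Yes, in Lima.", "Office closed last year")
second = queue.flag("What is the pricing for product X?", "It is free.", "Contradicts the price list")
queue.resolve(first.ticket_id)
print([t.ticket_id for t in queue.open_tickets()])
```

The number of open tickets over time gives a rough, practical measure of how much quality assurance effort the mentor requires.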

Implications for HCM and Payroll Vendors

Generative AI will have a major impact on the field of Human Capital Management solutions. There has been a significant initial focus on the impact of generative AI on recruiting, candidate marketing, and employee performance.

However, learning and onboarding will also see massive change as a result of generative AI.

A market for curation of Large Language Models for various industries and functional areas will appear. This could open new revenue streams for providers with strong existing domain knowledge.

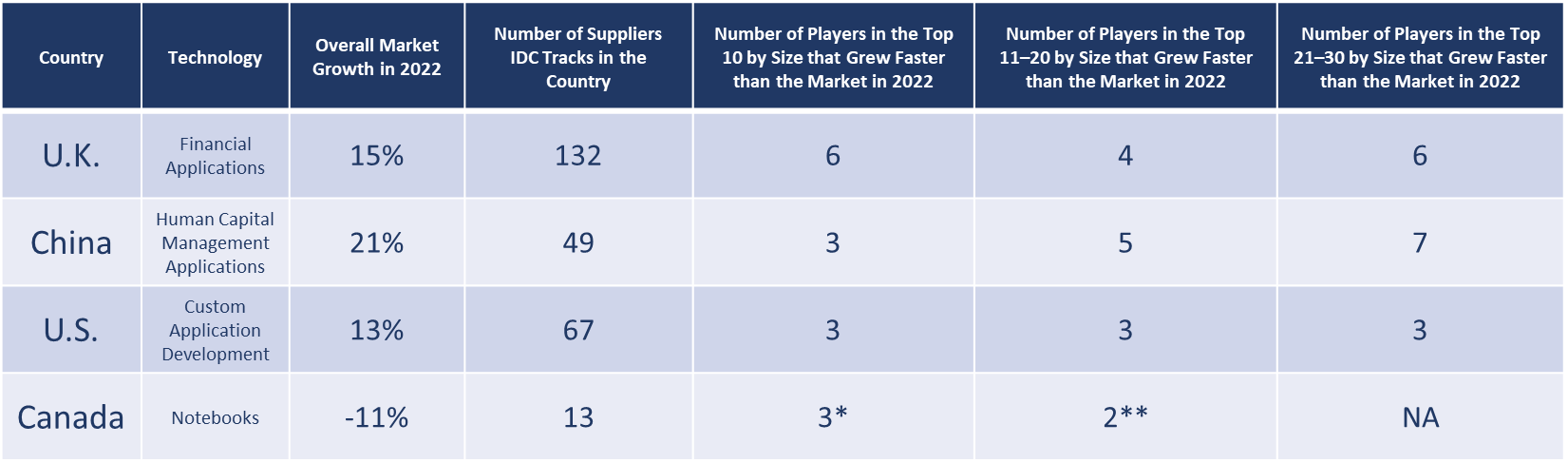

As displayed in the table above, different learning delivery methods will have different sweet spots. Classroom-based learning and traditional e-learning formats will not disappear.

What will happen, however, is that a lot of the more general learning and onboarding tasks will transition to generative AI-based learning formats. Initially, the formats will revolve around chat-based interfaces, but over time other user experiences and communication formats will emerge.

Generative AI is an opportunity for vendors of learning and onboarding solutions. However, they will need to react fast in terms of evolving existing solutions and building in generative AI features and aspects.

Existing learning and onboarding vendors will come under pressure from new providers of virtual mentors and other related generative AI-based solutions. Generative AI is a double-edged sword for HCM vendors: a blessing for those who are willing to revisit their existing offerings, but a curse for those who fail to respond.