Globally, cities are rediscovering the importance of their rivers as central to the health, wellbeing, and economy of a city. A river was often, if not always, the reason for a city to develop and grow, but during the 20th century, city authorities began to focus primarily on the built environment and to see water management as a less important sub-issue of city management.

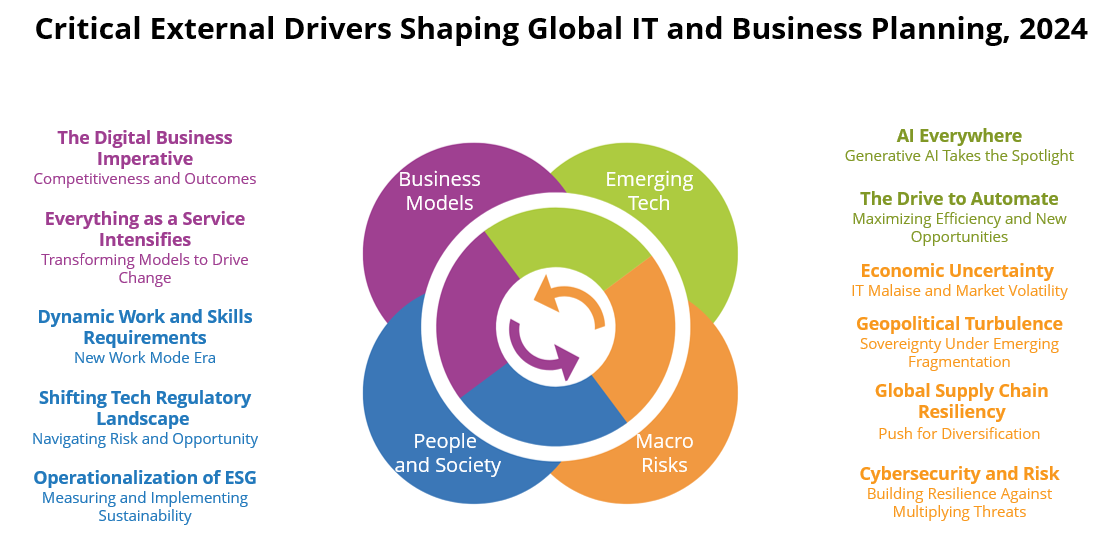

With the rise of environmental awareness in the 21st century, cities are beginning to re-examine the interrelationship between the built environment and their rivers. We have been tracking this new direction through our research on River Cities and on how technology now allows us to instrument both water and the built environment in concert.

According to our research, 28% of local governments across EMEA are already investing in smart rivers, with an additional 29% considering investing in the future (IDC Survey, December 2023).

The French are Reclaiming their Rivers

France is emerging as a leader in this process, and the clearest example of this will be the opening ceremony of the 2024 Olympic Games.

Paris is one of the most visited cities in the world, and it is impossible to imagine Paris without picturing the Seine. Olympic opening ceremonies are historically held in a stadium, but France will be using the banks of the Seine for the ceremony to increase participation and celebrate the special relationship between the river and the city.

The Digital Twin Project

A further French example of this new thinking is captured in a recently published IDC Perspective, *Building a River Digital Twin: A Case Study of the Port de Bordeaux*. This document provides an overview of a project led by the Port de Bordeaux, an entity managing marine activities across Bordeaux and the Gironde Estuary.

The objective of the project is to create a digital twin of the estuary, which is the largest in Western Europe and covers around 635 km². The Gironde Estuary is formed by the meeting of the Dordogne and Garonne rivers and spans several cities, the main one being Bordeaux, with more than 250,000 residents. The Port de Bordeaux manages 7 terminals and is the 7th largest French port in terms of traffic.

The digital twin was built to help project participants both in their day-to-day tactical decision-making (for example, information on water levels, pollution and navigation) and in addressing longer-term strategic challenges (adaptation to and impacts of climate change). The Port de Bordeaux developed 8 core goals for the digital twin project:

- Sharing and developing knowledge of the river.

- Promoting the exchange of data and operational results.

- Anticipating the effects of climate change.

- Identifying mitigation solutions.

- Developing economic, recreational and tourism opportunities.

- Preserving biodiversity and environmental wealth.

- Developing coastal and river surveillance (alert systems).

- Fostering replicability of the platform on other rivers.

An innovative aspect of this project is that the project team looked beyond environmental challenges to a broader set of objectives, including, for example, economic and recreational activities. This approach is centred on the view of a river as a complex ecosystem of different stakeholders and an integral part of the identity of the region. The project has a wide target audience, and the use cases, outputs and goals were co-created with the relevant stakeholders at the design stage.

Digital twins are at an early stage of adoption for rivers and marine environments. However, the application of technologies to the blue economy is increasing.

We predict that, by 2027, threatened by water scarcity and extreme weather, 40% of large cities will have digital twins of their water resources to manage water supply, quality, resilience and behavioural change (IDC Smart Cities and Communities 2023 Futurescape).

The Port de Bordeaux is an early adopter and can provide a model and blueprint for others to follow, because a core principle of the project was to make as many aspects as possible open source. Local stakeholders can upload their own data and use the GIROS platform to visualize their results. This allows a broader community of users to take advantage of the model.

Crucially, the Bordeaux project team intentionally designed the solution to be replicable on other rivers; while the numerical model for the Gironde is geographically specific, the framework and architecture of the solution are being made publicly available.

We are soon to publish our first Tech Innovator report on companies involved in rivers and water management. We are keen to hear of innovative technology solutions and case studies involving river management for future reports, so please do get in touch with us at jdignan@idc.com, lbarker@idc.com, or rletemple@idc.com.