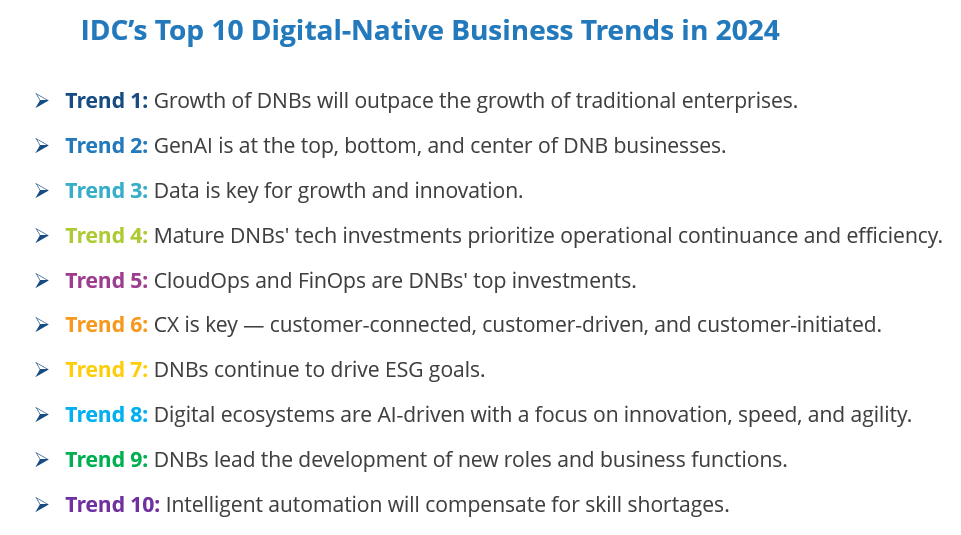

The EU’s new Corporate Sustainability Reporting Directive (CSRD) is putting organizations’ business processes under strain: Companies must modernize their applications and data foundations to enhance their reporting capabilities.

The struggle of companies in Europe to comply with the CSRD was on display at the ChangeNOW global summit, held in Paris at the end of March. Participants at the event — which seeks to map sustainable initiatives, best practices, tools, and technologies — revealed that organizations are lagging when it comes to implementing CSRD.

This is in line with results of IDC’s recent European IT Services Survey (N = 700), which found that just 25.6% of European organizations expect to deploy tech to improve sustainability KPIs as a transformation initiative in the next two years.

The CSRD is having a huge impact on organizations: It imposes reporting standards that compel them to publish their ESG information, which must then be verified and audited. Companies in all industry sectors, from large enterprises to SMBs, are subject to a staggered compliance timetable: The first reports must be published between 2025 and 2026 for large enterprises, and in 2027 for SMBs.

Everyone agrees on one point: It’s a race. The timetable is forcing organizations to accelerate work on data collection and qualification, and on methodologies and best practices, in order to structure and industrialize the creation of these reports.

CSRD weighs heavily at all levels of organizations. It requires a review of business processes and the organizational model, and, therefore, the modernization of core business applications — where the data is. New platforms or custom developments may need to be deployed to consolidate ESG data.

After examining their data lakes and the shift toward new data architectures, many businesses perceive this as a transformational endeavor.

As with any IT project, this complexity creates opportunities for services providers to support organizations with compliance. IDC surveys have shown that 41.2% of organizations expect partners to play a key role in implementing their sustainability strategy and achieving their objectives.

The Scaling Problem of Legacy Finance

Let’s examine where CSRD creates a bottleneck. The finance department’s processes are among those impacted. Today, the CFO is one of the guardians of the transformation of the finance function, whose scope has been extended to non-financial matters and CSR.

For example, the French bank Crédit Agricole and cosmetics specialist L’Oréal have entrusted the finance department with their CSRD projects. Experienced in standardized financial reporting, the CFO has the difficult task of reproducing and improving processes by integrating CSRD.

Logical, but still difficult to implement. One of the biggest challenges is getting the different personas impacted by CSRD, along with the data they own, around the same table to agree on the right communication channel and a shared vocabulary.

These human interconnections represent a real challenge in terms of governance but are necessary to deploy an application modernization strategy and convert the new operational model and business processes into a revitalized IT structure.

Financial IT systems are often very mature. CSRD requires them to scale rapidly to support new workloads in only three years. This includes related data initiatives: mapping data sets, breaking down information silos, increasing automation, and supporting heterogeneous files (PDF or Excel, for the most part).

Legacy systems must be modernized within the timeframe of the CSRD. But urgency means risks must be controlled. For example, misunderstanding the regulation and the requested data could have a negative impact on technology commitments and procurement.

Using GenAI to modernize legacy applications and make them “CSRD ready” has been explored to collect, map, and consolidate data, generate appropriate information for criteria, or automate the storytelling inside the CSRD reports.

Capgemini has detailed how GenAI could accelerate gap analysis by identifying which data is lacking and which data is relevant for presentation. L’Oréal said it sees GenAI as key to educating staff and familiarizing them with the criteria and wording of the regulation.
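
To make the idea of a gap analysis concrete: at its simplest, it compares the data points a report requires with those an organization already holds. The Python sketch below illustrates that comparison only. It does not use GenAI, and the data point names are hypothetical placeholders, not identifiers taken from the CSRD or its reporting standards.

```python
# Hypothetical data point names, for illustration only.
REQUIRED = {"ghg_scope_1", "ghg_scope_2", "energy_consumption", "gender_pay_gap"}


def gap_analysis(required, available):
    """Split data points into missing, covered, and not-required groups."""
    return {
        "missing": sorted(required - available),       # required but not held
        "covered": sorted(required & available),       # required and held
        "not_required": sorted(available - required),  # held but not required
    }


# Data points the organization is assumed to hold today.
available = {"ghg_scope_1", "energy_consumption", "office_count"}

report = gap_analysis(REQUIRED, available)
print(report["missing"])  # ['gender_pay_gap', 'ghg_scope_2']
```

In practice, the hard part is building the two lists, which is where the data mapping and cross-department work described earlier comes in.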

This scenario is in line with our vision for application modernization strategies in Europe.

The implementation of the CSRD — and, by extension, the major theme of sustainability — represents a powerful driver for adapting processes, revitalizing part of the application estate, and establishing a coherent link between IT and new business requirements.

Revitalizing applications to optimize business processes is a key theme of IDC’s European Application Modernization Strategies research program.

Modernize with a Sustainability/ESG Integration Platform

The challenge is to make the regulation a starting point for a broader strategy, and to place CSRD and sustainability at the center of the organization’s decision-making and business innovation.

We believe this requires building an enterprise architecture, including modular and loosely coupled components, to integrate systems, applications, and data in a flexible and sustainable way over time.

Such a sustainable integration platform will de-silo business applications and facilitate the continuous collection of data, the industrialization of analytical reporting, and the connection to ecosystems. In short, it means building a dynamic CSR link in the value chain and anticipating the evolution of reporting obligations.
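
As a rough illustration of what "modular and loosely coupled" can mean in practice, the Python sketch below has each source system expose the same small interface, so the consolidation step does not need to know where the data comes from. All class names, metrics, and values are hypothetical and chosen only for the example. This is one possible shape under those assumptions, not a reference design.

```python
from dataclasses import dataclass
from typing import Iterable, List, Protocol


@dataclass(frozen=True)
class EsgRecord:
    """One ESG data point in a common, source-independent format."""
    metric: str
    value: float
    unit: str
    source: str


class Connector(Protocol):
    """Interface every source system adapter is assumed to implement."""
    def fetch(self) -> Iterable[EsgRecord]: ...


class FinanceConnector:
    """Hypothetical adapter for a finance system (values are made up)."""
    def fetch(self) -> Iterable[EsgRecord]:
        return [EsgRecord("energy_consumption", 1200.0, "MWh", "finance")]


class HrConnector:
    """Hypothetical adapter for an HR system (values are made up)."""
    def fetch(self) -> Iterable[EsgRecord]:
        return [EsgRecord("headcount", 350.0, "employees", "hr")]


def consolidate(connectors: Iterable[Connector]) -> List[EsgRecord]:
    """Collect records from every connector into a single list."""
    records: List[EsgRecord] = []
    for connector in connectors:
        records.extend(connector.fetch())
    return records


records = consolidate([FinanceConnector(), HrConnector()])
for record in records:
    print(record.source, record.metric, record.value, record.unit)
```

Adding a new source then means writing one more connector, with no change to the consolidation logic, which is the kind of flexibility needed as reporting obligations evolve.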