We are a very inquisitive species with a remarkable long-term record of adaptation and with even more remarkable recent accomplishments in making the lives of most of the world’s population healthier, richer, safer, and longer. Still, fundamental constraints persist: We have changed some of them through our ingenuity, but such adjustments have their own limits.

— Vaclav Smil, How the World Really Works (2022)

The industrial sector needs resources more than ever, particularly rare minerals. Even as the hunt for such resources intensifies, the industry is pushing to achieve sustainable growth and meet new environmental, social, and governance (ESG) goals.

According to the Copper Alliance, renewable energy systems require up to 12x more copper than traditional energy systems. Copper demand is expected to increase nearly 600% by 2030.

Renault’s Chairman Jean-Dominique Senard told Reuters news agency: “If there’s a real geopolitical crisis, the damage to battery factories solely powered by products coming from outside will be considerable.”

According to the UN’s Intergovernmental Panel on Climate Change, reducing industry’s greenhouse gas (GHG) emissions requires coordinated action across value chains. Such action includes circular material flows and transformational changes in production processes.

The manufacturing industry remains at the forefront of efforts to reduce the impacts of extracting natural resources and to secure materials that enable low-carbon production. But to meet these and other challenges, organizations must continue to find efficient ways to transform their value chains into closed-loop flows of the basic materials needed to extend product lifetimes. And they should double down on their recycling programs by finding ways to turn materials from end-of-life products into completely new products.

A series of game changers have been pushing organizations to be more efficient with resources and to adopt the principles of the circular economy. These include:

- Organizations have been adopting sustainability policies that call for them to reduce their carbon footprints to at least net zero.

- The massive spread of electromobility has turned the EV battery business, and the rare minerals needed for such batteries, into critical assets.

- The COVID-19 crisis showed that it can be risky to depend on third parties to transport strategic materials around the world.

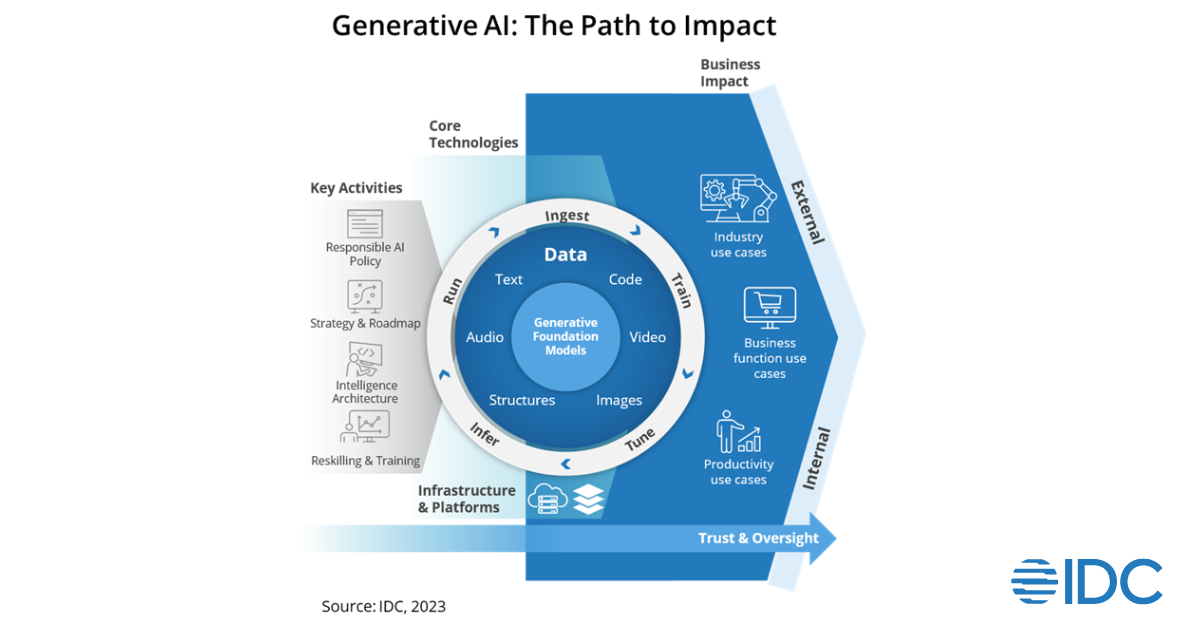

- Digital technology has developed significantly in the past three years, especially in terms of cloud-based digital platforms and IT infrastructure, artificial intelligence-powered digital tools, and generative AI engines.

Manufacturing organizations, at least in theory, are in an ideal position to make circular principles inseparable from operations. Operationalizing circular principles at scale, however, remains one of the biggest challenges for managers across lines of business and industries.

In IDC’s 2022 global survey of 1,300+ manufacturing organizations, 58% of respondents said they have already incorporated circular economy principles into operations, including design and production processes, waste reuse, and local sourcing of resources. More than two-fifths (43%) of respondents said that shrinking their carbon emissions and CO2 footprints is a key element of achieving their ESG/sustainability strategic business goals. A similar share (41%) also cited the goals of reducing waste and driving cost efficiencies.

Lower carbon emissions, as well as cost reductions driven by the optimized use of materials, labor, and assets, are among the benefits organizations report after adopting circular economy principles.

The auto industry is pioneering circularity principles in operations, particularly in the area of EV and EV battery production.

- In 2022, General Motors announced an initiative to recover and reuse the raw material in its Ultium battery packs, thus driving down costs and making the manufacturer’s EVs even more sustainable.

- Stellantis established a Circular Economy Business Unit whose objective is to “extend the life of vehicles and parts, ensuring that they last for as long as possible, and returning material and end-of-life vehicles to the manufacturing loop for new vehicles and products.” According to the company’s website, multi-brand parts that are still in good condition are recovered from end-of-life vehicles and sold in 155 countries through the B-Parts e-commerce platform.

- Renault’s “The Future Is NEUTRAL” entity aims to scale the closed-loop automotive circular economy and move the automotive industry toward resource neutrality.

These are all great initiatives that seek to improve material resiliency, make more efficient use of resources across the value chain, slow the impacts of climate change, and deliver sustainable profit and increased customer trust.

Operational Challenges

The following is a brief rundown of the operational challenges that organizations must tackle to reach a meaningful level of profitable circularity.

- Fragmented Approach: Many organizations lack a clear, unified strategy, so circular principles are applied opportunistically, mostly in production areas where the effort can bring immediate benefits or solve obvious issues.

- Logistics: Many organizations struggle with insufficient production infrastructure and related logistics. Applying remanufacturing and repair to current operational setups significantly reduces overall efficiency during production, warehousing, and delivery processes.

- Transparency and Flexibility: Implementation of circular principles in operations requires absolute transparency, traceability, and operational flexibility. To secure circular principles during the entire life cycle of the product, data related to the product’s usage must be captured and shared in real time in an autonomous, touchless way.

To address these challenges, organizations can use a digital thread (a closed loop between the physical product and its digital representation) to provide relevant feedback to stakeholders throughout the product’s lifetime. To make such data flows a reality, however, several technology elements must converge, including ubiquitous connectivity, IoT, digital twins, and data capturing and sharing via cloud-based digital platforms.
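As a rough illustration of that closed loop, the sketch below shows usage data from a physical product being recorded against its digital counterpart and turned into feedback for stakeholders. It is only a minimal sketch: the `UsageEvent` and `DigitalTwin` classes, their fields, the serial number, and the design-life figure are all hypothetical, and a real deployment would sit on IoT connectivity and a cloud data platform rather than in-memory objects.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class UsageEvent:
    """One reading captured from the physical product (hypothetical schema)."""
    serial_number: str
    timestamp: datetime
    operating_hours: float  # hours run since the previous reading
    source: str             # e.g. "iot-sensor" or "service-center"


@dataclass
class DigitalTwin:
    """Illustrative digital representation of a single physical product."""
    serial_number: str
    design_life_hours: float  # assumed design parameter, not from the article
    events: list[UsageEvent] = field(default_factory=list)

    def record(self, event: UsageEvent) -> None:
        """Attach a usage event to this twin, rejecting mismatched products."""
        if event.serial_number != self.serial_number:
            raise ValueError("event belongs to a different product")
        self.events.append(event)

    def total_hours(self) -> float:
        """Sum of operating hours reported so far."""
        return sum(e.operating_hours for e in self.events)

    def remaining_life_fraction(self) -> float:
        """Feedback for stakeholders: share of design life still available."""
        used = self.total_hours() / self.design_life_hours
        return max(0.0, 1.0 - used)


# Hypothetical product and readings, for illustration only.
twin = DigitalTwin(serial_number="PUMP-001", design_life_hours=1000.0)
twin.record(UsageEvent("PUMP-001", datetime(2023, 1, 1, tzinfo=timezone.utc), 250.0, "iot-sensor"))
twin.record(UsageEvent("PUMP-001", datetime(2023, 6, 1, tzinfo=timezone.utc), 150.0, "service-center"))
print(twin.total_hours(), twin.remaining_life_fraction())
```

A repair or remanufacturing center could use a figure like the remaining-life fraction to decide whether a returned unit is worth refurbishing, which is the kind of feedback the digital thread is meant to deliver.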

Detailed transparency requires seamless integration of enterprise software. Examples of such systems include product life-cycle management, bills of material hierarchy, enterprise resource planning with remanufacturing functionality, logistics management, manufacturing management platforms, and servicing platforms.

Up-front costs and investments can be significant barriers to circularity. Achieving meaningful impact at scale requires coordination across functions and the involvement of various stakeholders inside and even outside of the company.

Suppliers, reverse logistics providers, remanufacturing and repair centers, customers, and technology partners must be coordinated into a perfectly synchronized machine. A circular environment is far more complex than a traditional linear supply chain, so organizations may find it hard to build a business case with a short payback period.

Organizations must also determine whether circular principles can be applied to a product that is already in production — or if circular product design and management should instead be implemented only for new products, at the beginning of their life cycles.

Going “circular native,” as I term this last option, was very important to 46% of respondents to an IDC Manufacturing Insights survey and extremely important to 38%. “Circular native” is defined not by materials or extended life cycles but by a digital thread that enables data sharing across a product’s entire lifetime.

The operationalization of circularity requires solid collaboration among procurement, engineering, and supply chain managers, especially during the design and supplier selection process.

It must also be acknowledged that the complexity of supply chains can make it challenging to establish closed-loop systems. Collaboration and coordination among suppliers, customers, and other partners are necessary for efficient material flows. And resource recovery must be underpinned by digital technology (e.g., cloud-based supply chain control towers).

Boiling the Ocean?

For some leaders, embedding circularity principles in manufacturing operations — including reengineering product specifications according to circular principles — may feel a bit like “boiling the ocean,” or undertaking a seemingly impossible or unnecessarily difficult task.

Yet there are a great many benefits to providing data on technology processes and supply chains to stakeholders in real time. Products connected via digital thread to closed-loop stakeholders can help organizations better manage the product’s life-cycle bill of materials, collect data to improve the next generation of the product, and contextualize product data with current point-of-use data to provide a comprehensive view of the product’s life-cycle status.

To achieve circular economy success, circular principles must be embedded across the entire product life cycle, including packaging. And the digital twin of the product must be integrated with a cloud data platform.

Circularity is not just about utilizing sustainable and recyclable materials: Life extension is a significant element. The most sustainable material is one that doesn’t need to be processed. Repair and remanufacturing are thus integral steps of the product life cycle.

Circularity also requires investments in digital tools capable of handling manufacturing processes in which input and output indicators may not always be well defined. Manufacturers that tackle this challenge should consider dedicated software enhanced with features like reverse bills of material, disassembly, expected recovery and kitting, remanufactured parts management, and remanufacturing pricing with core charges.
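To make the idea of a reverse bill of materials with expected recovery more concrete, here is a minimal sketch. It assumes a reverse BOM can be modeled as a list of parts, each with a quantity per returned unit and an expected recovery rate. The `RecoveryLine` class, the `expected_recovered_parts` function, the part names, and all figures are hypothetical and chosen purely for illustration; they do not describe any particular vendor’s software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecoveryLine:
    """One line of a hypothetical reverse bill of materials."""
    part_number: str
    quantity_per_unit: int         # parts of this type in one returned product
    expected_recovery_rate: float  # share expected to be reusable, 0.0 to 1.0

    def __post_init__(self) -> None:
        if not 0.0 <= self.expected_recovery_rate <= 1.0:
            raise ValueError("expected_recovery_rate must be between 0 and 1")


def expected_recovered_parts(
    reverse_bom: list[RecoveryLine], returned_units: int
) -> dict[str, float]:
    """Estimate reusable parts per part number for a batch of returned units."""
    return {
        line.part_number: returned_units
        * line.quantity_per_unit
        * line.expected_recovery_rate
        for line in reverse_bom
    }


# Illustrative figures only: a returned battery pack with two recoverable parts.
battery_pack_reverse_bom = [
    RecoveryLine("cell-module", quantity_per_unit=8, expected_recovery_rate=0.75),
    RecoveryLine("pack-housing", quantity_per_unit=1, expected_recovery_rate=0.5),
]

forecast = expected_recovered_parts(battery_pack_reverse_bom, returned_units=100)
print(forecast)
```

An estimate like this could feed kitting and remanufactured parts planning. Because actual yields from disassembly vary, the recovery rates would need to be updated from real teardown data rather than fixed up front.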

Data and contextualized life-cycle information, including carbon emissions, are real enablers of the optimization of circularity principles in the manufacturing and supply chain environment.

In today’s hyperconnected world, moving from fascination with circular principles to visualizing and then implementing them isn’t viable without reliable and secure digital infrastructure, relevant digital tools, and AI-powered technology.

Bottom line: When it comes to securing material resiliency and achieving ESG goals, there is no time for hesitation or inertia!
